Percolate queries are also known as Persistent queries, Prospective search, document routing, search in reverse and inverse search.

Normally, you store documents and run search queries against them. In some cases, however, you want the reverse: apply stored queries to each incoming document and signal which of them match. For example, a monitoring system doesn't just collect data; it should also notify users about specific events, such as a metric reaching a threshold or a certain value appearing in the monitored data. News aggregation is a similar case. You could notify the user about every fresh news item, but the user might want to be notified only about certain categories or topics, or even only about certain "keywords".

A traditional search is not a good fit here, since it would mean running each desired search over the entire collection. Multiplied by the number of users, that produces lots of queries running over the entire collection, which can add significant load. The idea explained in this section is to store the queries instead and test them against each incoming document or batch of documents.
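To make the idea concrete, here is a minimal in-memory sketch in Python. It is not how Manticore implements percolation; the `Rule` class and the `percolate` function are hypothetical names used only for illustration, and matching is reduced to a single keyword plus an optional filter.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Rule:
    """A stored query: a required keyword plus an optional attribute filter."""
    rule_id: int
    keyword: str
    matches_filter: Callable[[Dict[str, str]], bool] = lambda doc: True


def percolate(rules: List[Rule], doc: Dict[str, str]) -> List[int]:
    """Return the ids of the stored rules that the incoming document satisfies."""
    words = doc.get("title", "").lower().split()
    return [r.rule_id for r in rules
            if r.keyword in words and r.matches_filter(doc)]


# The queries are stored once, up front.
rules = [
    Rule(1, "bag"),
    Rule(2, "shoes", lambda d: d.get("color") == "red"),
]

# Each incoming document is then checked against all stored rules.
print(percolate(rules, {"title": "What a nice bag"}))            # [1]
print(percolate(rules, {"title": "Red shoes", "color": "red"}))  # [2]
print(percolate(rules, {"title": "Beautiful shoes"}))            # []
```

The cost of each check depends on the number of stored rules and the size of the incoming document, not on the size of any document collection.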

Google Alerts, AlertHN, Bloomberg Terminal and other systems that let their users subscribe to specific content use a similar technology.

- See percolate to learn how to create a PQ table.

- See Adding rules to a percolate table to learn how to add percolate rules (also known as PQ rules). Here is just a quick example.

The key thing you need to remember about percolate queries is that you already have your search queries in the table. What you need to provide is documents to check if any of them match any of the stored rules.

You can perform a percolate query via the SQL or JSON interface, as well as using programming language clients. The SQL way gives more flexibility, while the HTTP way is simpler and covers most needs. The table below summarizes the differences.

| Desired behaviour | SQL | HTTP | PHP |
|---|---|---|---|
| Provide a single document | CALL PQ('tbl', '{doc1}') | query.percolate.document{doc1} | $client->pq()->search([$percolate]) |
| Provide a single document (alternative) | CALL PQ('tbl', 'doc1', 0 as docs_json) | - | |
| Provide multiple documents | CALL PQ('tbl', ('doc1', 'doc2'), 0 as docs_json) | query.percolate.documents[{doc1}, {doc2}] | $client->pq()->search([$percolate]) |
| Provide multiple documents (alternative) | CALL PQ('tbl', ('{doc1}', '{doc2}')) | - | - |
| Provide multiple documents (alternative) | CALL PQ('tbl', '[{doc1}, {doc2}]') | - | - |
| Return matching document ids | 0/1 as docs (disabled by default) | Enabled by default | Enabled by default |
| Use document's own id to show in the result | 'id field' as docs_id (disabled by default) | Not available | Not available |
| Consider input documents are JSON | 1 as docs_json (1 by default) | Enabled by default | Enabled by default |
| Consider input documents are plain text | 0 as docs_json (1 by default) | Not available | Not available |
| Sparsed distribution mode | default | default | default |
| Sharded distribution mode | sharded as mode | Not available | Not available |
| Return all info about matching query | 1 as query (0 by default) | Enabled by default | Enabled by default |
| Skip invalid JSON | 1 as skip_bad_json (0 by default) | Not available | Not available |
| Extended info in SHOW META | 1 as verbose (0 by default) | Not available | Not available |
| Define the number which will be added to document ids if no docs_id fields provided (makes sense mostly in distributed PQ modes) | 1 as shift (0 by default) | Not available | Not available |
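As a rough illustration of the HTTP column above, the following Python sketch builds the request body for a single document (`query.percolate.document`) or for several (`query.percolate.documents`). The helper name `percolate_body` is an assumption made for this example; it only produces the JSON text and does not send anything.

```python
import json
from typing import Dict, List, Union

Doc = Dict[str, object]


def percolate_body(docs: Union[Doc, List[Doc]]) -> str:
    """Build the JSON body: one dict goes under "document", a list under "documents"."""
    key = "documents" if isinstance(docs, list) else "document"
    return json.dumps({"query": {"percolate": {key: docs}}})


# Single document
print(percolate_body({"title": "What a nice bag"}))

# Multiple documents
print(percolate_body([
    {"title": "nice pair of shoes", "color": "blue"},
    {"title": "beautiful bag"},
]))
```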

To demonstrate how it works, here are a few examples. Let's create a PQ table with 2 fields:

- title (text)

- color (string)

and 3 rules in it:

- Just full-text. Query: @title bag

- Full-text and filtering. Query: @title shoes. Filters: color='red'

- Full-text and more complex filtering. Query: @title shoes. Filters: color IN('blue', 'green')

- SQL

- JSON

- PHP

- Python

- javascript

- Java

CREATE TABLE products(title text, color string) type='pq';

INSERT INTO products(query) values('@title bag');

INSERT INTO products(query,filters) values('@title shoes', 'color=\'red\'');

INSERT INTO products(query,filters) values('@title shoes', 'color in (\'blue\', \'green\')');

select * from products;

JSON:

PUT /pq/products/doc/

{

"query": {

"match": {

"title": "bag"

}

},

"filters": ""

}

PUT /pq/products/doc/

{

"query": {

"match": {

"title": "shoes"

}

},

"filters": "color='red'"

}

PUT /pq/products/doc/

{

"query": {

"match": {

"title": "shoes"

}

},

"filters": "color IN ('blue', 'green')"

}

PHP:

$index = [

'index' => 'products',

'body' => [

'columns' => [

'title' => ['type' => 'text'],

'color' => ['type' => 'string']

],

'settings' => [

'type' => 'pq'

]

]

];

$client->indices()->create($index);

$query = [

'index' => 'products',

'body' => [ 'query'=>['match'=>['title'=>'bag']]]

];

$client->pq()->doc($query);

$query = [

'index' => 'products',

'body' => [ 'query'=>['match'=>['title'=>'shoes']],'filters'=>"color='red'"]

];

$client->pq()->doc($query);

$query = [

'index' => 'products',

'body' => [ 'query'=>['match'=>['title'=>'shoes']],'filters'=>"color IN ('blue', 'green')"]

];

$client->pq()->doc($query);

Python:

utilsApi.sql('create table products(title text, color string) type=\'pq\'')

indexApi.insert({"index" : "products", "doc" : {"query" : "@title bag" }})

indexApi.insert({"index" : "products", "doc" : {"query" : "@title shoes", "filters": "color='red'" }})

indexApi.insert({"index" : "products", "doc" : {"query" : "@title shoes","filters": "color IN ('blue', 'green')" }})

javascript:

res = await utilsApi.sql('create table products(title text, color string) type=\'pq\'');

res = await indexApi.insert({"index" : "products", "doc" : {"query" : "@title bag" }});

res = await indexApi.insert({"index" : "products", "doc" : {"query" : "@title shoes", "filters": "color='red'" }});

res = await indexApi.insert({"index" : "products", "doc" : {"query" : "@title shoes","filters": "color IN ('blue', 'green')" }});

Java:

utilsApi.sql("create table products(title text, color string) type='pq'");

doc = new HashMap<String,Object>(){{

put("query", "@title bag");

}};

newdoc = new InsertDocumentRequest();

newdoc.index("products").setDoc(doc);

indexApi.insert(newdoc);

doc = new HashMap<String,Object>(){{

put("query", "@title shoes");

put("filters", "color='red'");

}};

newdoc = new InsertDocumentRequest();

newdoc.index("products").setDoc(doc);

indexApi.insert(newdoc);

doc = new HashMap<String,Object>(){{

put("query", "@title shoes");

put("filters", "color IN ('blue', 'green')");

}};

newdoc = new InsertDocumentRequest();

newdoc.index("products").setDoc(doc);

indexApi.insert(newdoc);

SQL response:

+---------------------+--------------+------+---------------------------+

| id | query | tags | filters |

+---------------------+--------------+------+---------------------------+

| 1657852401006149635 | @title shoes | | color IN ('blue', 'green') |

| 1657852401006149636 | @title shoes | | color='red' |

| 1657852401006149637 | @title bag | | |

+---------------------+--------------+------+---------------------------+

JSON response:

{

"index": "products",

"type": "doc",

"_id": "1657852401006149661",

"result": "created"

}

{

"index": "products",

"type": "doc",

"_id": "1657852401006149662",

"result": "created"

}

{

"index": "products",

"type": "doc",

"_id": "1657852401006149663",

"result": "created"

}

PHP response:

Array(

[index] => products

[type] => doc

[_id] => 1657852401006149661

[result] => created

)

Array(

[index] => products

[type] => doc

[_id] => 1657852401006149662

[result] => created

)

Array(

[index] => products

[type] => doc

[_id] => 1657852401006149663

[result] => created

)

Python response:

{'created': True,

'found': None,

'id': 0,

'index': 'products',

'result': 'created'}

{'created': True,

'found': None,

'id': 0,

'index': 'products',

'result': 'created'}

{'created': True,

'found': None,

'id': 0,

'index': 'products',

'result': 'created'}

javascript response:

{"_index":"products","_id":0,"created":true,"result":"created"}

{"_index":"products","_id":0,"created":true,"result":"created"}

{"_index":"products","_id":0,"created":true,"result":"created"}

Java response:

{total=0, error=, warning=}

class SuccessResponse {

index: products

id: 0

created: true

result: created

found: null

}

class SuccessResponse {

index: products

id: 0

created: true

result: created

found: null

}

class SuccessResponse {

index: products

id: 0

created: true

result: created

found: null

}

The first document doesn't match any rules. It could match the two @title shoes rules, but they also require a color filter that the document does not satisfy.

The second document matches one rule. Note that CALL PQ by default expects a document to be JSON, but if you add 0 as docs_json you can pass a plain string instead.

- SQL

- JSON

- PHP

- Python

- javascript

- Java

CALL PQ('products', 'Beautiful shoes', 0 as docs_json);

CALL PQ('products', 'What a nice bag', 0 as docs_json);

CALL PQ('products', '{"title": "What a nice bag"}');

JSON:

POST /pq/products/_search

{

"query": {

"percolate": {

"document": {

"title": "What a nice bag"

}

}

}

}

PHP:

$percolate = [

'index' => 'products',

'body' => [

'query' => [

'percolate' => [

'document' => [

'title' => 'What a nice bag'

]

]

]

]

];

$client->pq()->search($percolate);

Python:

searchApi.percolate('products',{"query":{"percolate":{"document":{"title":"What a nice bag"}}}})

javascript:

res = await searchApi.percolate('products',{"query":{"percolate":{"document":{"title":"What a nice bag"}}}});

Java:

PercolateRequest percolateRequest = new PercolateRequest();

query = new HashMap<String,Object>(){{

put("percolate",new HashMap<String,Object >(){{

put("document", new HashMap<String,Object >(){{

put("title","what a nice bag");

}});

}});

}};

percolateRequest.query(query);

searchApi.percolate("products",percolateRequest);

SQL response:

+---------------------+

| id |

+---------------------+

| 1657852401006149637 |

+---------------------+

+---------------------+

| id |

+---------------------+

| 1657852401006149637 |

+---------------------+

JSON response:

{

"took": 0,

"timed_out": false,

"hits": {

"total": 1,

"max_score": 1,

"hits": [

{

"_index": "products",

"_type": "doc",

"_id": "1657852401006149644",

"_score": "1",

"_source": {

"query": {

"ql": "@title bag"

}

},

"fields": {

"_percolator_document_slot": [

1

]

}

}

]

}

}

PHP response:

Array

(

[took] => 0

[timed_out] =>

[hits] => Array

(

[total] => 1

[max_score] => 1

[hits] => Array

(

[0] => Array

(

[_index] => products

[_type] => doc

[_id] => 1657852401006149644

[_score] => 1

[_source] => Array

(

[query] => Array

(

[match] => Array

(

[title] => bag

)

)

)

[fields] => Array

(

[_percolator_document_slot] => Array

(

[0] => 1

)

)

)

)

)

)

Python response:

{'hits': {'hits': [{u'_id': u'2811025403043381480',

u'_index': u'products',

u'_score': u'1',

u'_source': {u'query': {u'ql': u'@title bag'}},

u'_type': u'doc',

u'fields': {u'_percolator_document_slot': [1]}}],

'total': 1},

'profile': None,

'timed_out': False,

'took': 0}

javascript response:

{

"took": 0,

"timed_out": false,

"hits": {

"total": 1,

"hits": [

{

"_index": "products",

"_type": "doc",

"_id": "2811045522851233808",

"_score": "1",

"_source": {

"query": {

"ql": "@title bag"

}

},

"fields": {

"_percolator_document_slot": [

1

]

}

}

]

}

}

Java response:

class SearchResponse {

took: 0

timedOut: false

hits: class SearchResponseHits {

total: 1

maxScore: 1

hits: [{_index=products, _type=doc, _id=2811045522851234109, _score=1, _source={query={ql=@title bag}}, fields={_percolator_document_slot=[1]}}]

aggregations: null

}

profile: null

}

- SQL

- JSON

- PHP

- Python

- javascript

- Java

CALL PQ('products', '{"title": "What a nice bag"}', 1 as query);

JSON:

POST /pq/products/_search

{

"query": {

"percolate": {

"document": {

"title": "What a nice bag"

}

}

}

}

PHP:

$percolate = [

'index' => 'products',

'body' => [

'query' => [

'percolate' => [

'document' => [

'title' => 'What a nice bag'

]

]

]

]

];

$client->pq()->search($percolate);

Python:

searchApi.percolate('products',{"query":{"percolate":{"document":{"title":"What a nice bag"}}}})

javascript:

res = await searchApi.percolate('products',{"query":{"percolate":{"document":{"title":"What a nice bag"}}}});

Java:

PercolateRequest percolateRequest = new PercolateRequest();

query = new HashMap<String,Object>(){{

put("percolate",new HashMap<String,Object >(){{

put("document", new HashMap<String,Object >(){{

put("title","what a nice bag");

}});

}});

}};

percolateRequest.query(query);

searchApi.percolate("products",percolateRequest);

SQL response:

+---------------------+------------+------+---------+

| id | query | tags | filters |

+---------------------+------------+------+---------+

| 1657852401006149637 | @title bag | | |

+---------------------+------------+------+---------+

JSON response:

{

"took": 0,

"timed_out": false,

"hits": {

"total": 1,

"max_score": 1,

"hits": [

{

"_index": "products",

"_type": "doc",

"_id": "1657852401006149644",

"_score": "1",

"_source": {

"query": {

"ql": "@title bag"

}

},

"fields": {

"_percolator_document_slot": [

1

]

}

}

]

}

}

PHP response:

Array

(

[took] => 0

[timed_out] =>

[hits] => Array

(

[total] => 1

[max_score] => 1

[hits] => Array

(

[0] => Array

(

[_index] => products

[_type] => doc

[_id] => 1657852401006149644

[_score] => 1

[_source] => Array

(

[query] => Array

(

[match] => Array

(

[title] => bag

)

)

)

[fields] => Array

(

[_percolator_document_slot] => Array

(

[0] => 1

)

)

)

)

)

)

Python response:

{'hits': {'hits': [{u'_id': u'2811025403043381480',

u'_index': u'products',

u'_score': u'1',

u'_source': {u'query': {u'ql': u'@title bag'}},

u'_type': u'doc',

u'fields': {u'_percolator_document_slot': [1]}}],

'total': 1},

'profile': None,

'timed_out': False,

'took': 0}

javascript response:

{

"took": 0,

"timed_out": false,

"hits": {

"total": 1,

"hits": [

{

"_index": "products",

"_type": "doc",

"_id": "2811045522851233808",

"_score": "1",

"_source": {

"query": {

"ql": "@title bag"

}

},

"fields": {

"_percolator_document_slot": [

1

]

}

}

]

}

}

Java response:

class SearchResponse {

took: 0

timedOut: false

hits: class SearchResponseHits {

total: 1

maxScore: 1

hits: [{_index=products, _type=doc, _id=2811045522851234109, _score=1, _source={query={ql=@title bag}}, fields={_percolator_document_slot=[1]}}]

aggregations: null

}

profile: null

}

Note that via CALL PQ you can provide multiple documents in different ways:

- as an array of plain documents in round brackets: ('doc1', 'doc2'). This requires 0 as docs_json

- as an array of JSONs in round brackets: ('{doc1}', '{doc2}')

- or as a standard JSON array: '[{doc1}, {doc2}]'
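The three forms can be generated programmatically. The Python sketch below builds the statement text for each one; the helper names (`sql_quote`, `call_pq_plain`, `call_pq_json_list`, `call_pq_json_array`) are hypothetical, and the backslash escaping of quotes is an assumption modelled on the INSERT examples earlier in this section.

```python
import json
from typing import Dict, List


def sql_quote(text: str) -> str:
    """Wrap text in single quotes, backslash-escaping backslashes and quotes (assumed escaping)."""
    return "'" + text.replace("\\", "\\\\").replace("'", "\\'") + "'"


def call_pq_plain(table: str, texts: List[str]) -> str:
    """Plain-text documents in round brackets; this form needs 0 as docs_json."""
    docs = ", ".join(sql_quote(t) for t in texts)
    return f"CALL PQ({sql_quote(table)}, ({docs}), 0 as docs_json)"


def call_pq_json_list(table: str, docs: List[Dict[str, object]]) -> str:
    """One JSON string per document, in round brackets."""
    items = ", ".join(sql_quote(json.dumps(d)) for d in docs)
    return f"CALL PQ({sql_quote(table)}, ({items}))"


def call_pq_json_array(table: str, docs: List[Dict[str, object]]) -> str:
    """All documents in a single standard JSON array."""
    return f"CALL PQ({sql_quote(table)}, {sql_quote(json.dumps(docs))})"


docs = [{"title": "nice pair of shoes", "color": "blue"}, {"title": "beautiful bag"}]
print(call_pq_plain("products", ["nice pair of shoes", "beautiful bag"]))
print(call_pq_json_list("products", docs))
print(call_pq_json_array("products", docs))
```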

- SQL

- JSON

- PHP

- Python

- javascript

- Java

CALL PQ('products', ('nice pair of shoes', 'beautiful bag'), 1 as query, 0 as docs_json);

CALL PQ('products', ('{"title": "nice pair of shoes", "color": "red"}', '{"title": "beautiful bag"}'), 1 as query);

CALL PQ('products', '[{"title": "nice pair of shoes", "color": "blue"}, {"title": "beautiful bag"}]', 1 as query);

JSON:

POST /pq/products/_search

{

"query": {

"percolate": {

"documents": [

{"title": "nice pair of shoes", "color": "blue"},

{"title": "beautiful bag"}

]

}

}

}

PHP:

$percolate = [

'index' => 'products',

'body' => [

'query' => [

'percolate' => [

'documents' => [

['title' => 'nice pair of shoes','color'=>'blue'],

['title' => 'beautiful bag']

]

]

]

]

];

$client->pq()->search($percolate);

Python:

searchApi.percolate('products',{"query":{"percolate":{"documents":[{"title":"nice pair of shoes","color":"blue"},{"title":"beautiful bag"}]}}})

javascript:

res = await searchApi.percolate('products',{"query":{"percolate":{"documents":[{"title":"nice pair of shoes","color":"blue"},{"title":"beautiful bag"}]}}});

Java:

percolateRequest = new PercolateRequest();

query = new HashMap<String,Object>(){{

put("percolate",new HashMap<String,Object >(){{

put("documents", new ArrayList<Object>(){{

add(new HashMap<String,Object >(){{

put("title","nice pair of shoes");

put("color","blue");

}});

add(new HashMap<String,Object >(){{

put("title","beautiful bag");

}});

}});

}});

}};

percolateRequest.query(query);

searchApi.percolate("products",percolateRequest);

SQL response:

+---------------------+------------+------+---------+

| id | query | tags | filters |

+---------------------+------------+------+---------+

| 1657852401006149637 | @title bag | | |

+---------------------+------------+------+---------+

+---------------------+--------------+------+-------------+

| id | query | tags | filters |

+---------------------+--------------+------+-------------+

| 1657852401006149636 | @title shoes | | color='red' |

| 1657852401006149637 | @title bag | | |

+---------------------+--------------+------+-------------+

+---------------------+--------------+------+---------------------------+

| id | query | tags | filters |

+---------------------+--------------+------+---------------------------+

| 1657852401006149635 | @title shoes | | color IN ('blue', 'green') |

| 1657852401006149637 | @title bag | | |

+---------------------+--------------+------+---------------------------+

JSON response:

{

"took": 0,

"timed_out": false,

"hits": {

"total": 2,

"max_score": 1,

"hits": [

{

"_index": "products",

"_type": "doc",

"_id": "1657852401006149644",

"_score": "1",

"_source": {

"query": {

"ql": "@title bag"

}

},

"fields": {

"_percolator_document_slot": [

2

]

}

},

{

"_index": "products",

"_type": "doc",

"_id": "1657852401006149646",

"_score": "1",

"_source": {

"query": {

"ql": "@title shoes"

}

},

"fields": {

"_percolator_document_slot": [

1

]

}

}

]

}

}

PHP response:

Array

(

[took] => 23

[timed_out] =>

[hits] => Array

(

[total] => 2

[max_score] => 1

[hits] => Array

(

[0] => Array

(

[_index] => products

[_type] => doc

[_id] => 2810781492890828819

[_score] => 1

[_source] => Array

(

[query] => Array

(

[match] => Array

(

[title] => bag

)

)

)

[fields] => Array

(

[_percolator_document_slot] => Array

(

[0] => 2

)

)

)

[1] => Array

(

[_index] => products

[_type] => doc

[_id] => 2810781492890828821

[_score] => 1

[_source] => Array

(

[query] => Array

(

[match] => Array

(

[title] => shoes

)

)

)

[fields] => Array

(

[_percolator_document_slot] => Array

(

[0] => 1

)

)

)

)

)

)

Python response:

{'hits': {'hits': [{u'_id': u'2811025403043381494',

u'_index': u'products',

u'_score': u'1',

u'_source': {u'query': {u'ql': u'@title bag'}},

u'_type': u'doc',

u'fields': {u'_percolator_document_slot': [2]}},

{u'_id': u'2811025403043381496',

u'_index': u'products',

u'_score': u'1',

u'_source': {u'query': {u'ql': u'@title shoes'}},

u'_type': u'doc',

u'fields': {u'_percolator_document_slot': [1]}}],

'total': 2},

'profile': None,

'timed_out': False,

'took': 0}

javascript response:

{

"took": 6,

"timed_out": false,

"hits": {

"total": 2,

"hits": [

{

"_index": "products",

"_type": "doc",

"_id": "2811045522851233808",

"_score": "1",

"_source": {

"query": {

"ql": "@title bag"

}

},

"fields": {

"_percolator_document_slot": [

2

]

}

},

{

"_index": "products",

"_type": "doc",

"_id": "2811045522851233810",

"_score": "1",

"_source": {

"query": {

"ql": "@title shoes"

}

},

"fields": {

"_percolator_document_slot": [

1

]

}

}

]

}

}

Java response:

class SearchResponse {

took: 0

timedOut: false

hits: class SearchResponseHits {

total: 2

maxScore: 1

hits: [{_index=products, _type=doc, _id=2811045522851234133, _score=1, _source={query={ql=@title bag}}, fields={_percolator_document_slot=[2]}}, {_index=products, _type=doc, _id=2811045522851234135, _score=1, _source={query={ql=@title shoes}}, fields={_percolator_document_slot=[1]}}]

aggregations: null

}

profile: null

}

- SQL

- JSON

- PHP

- Python

- javascript

- Java

CALL PQ('products', '[{"title": "nice pair of shoes", "color": "blue"}, {"title": "beautiful bag"}]', 1 as query, 1 as docs);

JSON:

POST /pq/products/_search

{

"query": {

"percolate": {

"documents": [

{"title": "nice pair of shoes", "color": "blue"},

{"title": "beautiful bag"}

]

}

}

}

PHP:

$percolate = [

'index' => 'products',

'body' => [

'query' => [

'percolate' => [

'documents' => [

['title' => 'nice pair of shoes','color'=>'blue'],

['title' => 'beautiful bag']

]

]

]

]

];

$client->pq()->search($percolate);

Python:

searchApi.percolate('products',{"query":{"percolate":{"documents":[{"title":"nice pair of shoes","color":"blue"},{"title":"beautiful bag"}]}}})

javascript:

res = await searchApi.percolate('products',{"query":{"percolate":{"documents":[{"title":"nice pair of shoes","color":"blue"},{"title":"beautiful bag"}]}}});

Java:

percolateRequest = new PercolateRequest();

query = new HashMap<String,Object>(){{

put("percolate",new HashMap<String,Object >(){{

put("documents", new ArrayList<Object>(){{

add(new HashMap<String,Object >(){{

put("title","nice pair of shoes");

put("color","blue");

}});

add(new HashMap<String,Object >(){{

put("title","beautiful bag");

}});

}});

}});

}};

percolateRequest.query(query);

searchApi.percolate("products",percolateRequest);

SQL response:

+---------------------+-----------+--------------+------+---------------------------+

| id | documents | query | tags | filters |

+---------------------+-----------+--------------+------+---------------------------+

| 1657852401006149635 | 1 | @title shoes | | color IN ('blue', 'green') |

| 1657852401006149637 | 2 | @title bag | | |

+---------------------+-----------+--------------+------+---------------------------+

JSON response:

{

"took": 0,

"timed_out": false,

"hits": {

"total": 2,

"max_score": 1,

"hits": [

{

"_index": "products",

"_type": "doc",

"_id": "1657852401006149644",

"_score": "1",

"_source": {

"query": {

"ql": "@title bag"

}

},

"fields": {

"_percolator_document_slot": [

2

]

}

},

{

"_index": "products",

"_type": "doc",

"_id": "1657852401006149646",

"_score": "1",

"_source": {

"query": {

"ql": "@title shoes"

}

},

"fields": {

"_percolator_document_slot": [

1

]

}

}

]

}

}

PHP response:

Array

(

[took] => 23

[timed_out] =>

[hits] => Array

(

[total] => 2

[max_score] => 1

[hits] => Array

(

[0] => Array

(

[_index] => products

[_type] => doc

[_id] => 2810781492890828819

[_score] => 1

[_source] => Array

(

[query] => Array

(

[match] => Array

(

[title] => bag

)

)

)

[fields] => Array

(

[_percolator_document_slot] => Array

(

[0] => 2

)

)

)

[1] => Array

(

[_index] => products

[_type] => doc

[_id] => 2810781492890828821

[_score] => 1

[_source] => Array

(

[query] => Array

(

[match] => Array

(

[title] => shoes

)

)

)

[fields] => Array

(

[_percolator_document_slot] => Array

(

[0] => 1

)

)

)

)

)

)

Python response:

{'hits': {'hits': [{u'_id': u'2811025403043381494',

u'_index': u'products',

u'_score': u'1',

u'_source': {u'query': {u'ql': u'@title bag'}},

u'_type': u'doc',

u'fields': {u'_percolator_document_slot': [2]}},

{u'_id': u'2811025403043381496',

u'_index': u'products',

u'_score': u'1',

u'_source': {u'query': {u'ql': u'@title shoes'}},

u'_type': u'doc',

u'fields': {u'_percolator_document_slot': [1]}}],

'total': 2},

'profile': None,

'timed_out': False,

'took': 0}

javascript response:

{

"took": 6,

"timed_out": false,

"hits": {

"total": 2,

"hits": [

{

"_index": "products",

"_type": "doc",

"_id": "2811045522851233808",

"_score": "1",

"_source": {

"query": {

"ql": "@title bag"

}

},

"fields": {

"_percolator_document_slot": [

2

]

}

},

{

"_index": "products",

"_type": "doc",

"_id": "2811045522851233810",

"_score": "1",

"_source": {

"query": {

"ql": "@title shoes"

}

},

"fields": {

"_percolator_document_slot": [

1

]

}

}

]

}

}

Java response:

class SearchResponse {

took: 0

timedOut: false

hits: class SearchResponseHits {

total: 2

maxScore: 1

hits: [{_index=products, _type=doc, _id=2811045522851234133, _score=1, _source={query={ql=@title bag}}, fields={_percolator_document_slot=[2]}}, {_index=products, _type=doc, _id=2811045522851234135, _score=1, _source={query={ql=@title shoes}}, fields={_percolator_document_slot=[1]}}]

aggregations: null

}

profile: null

}

By default, matching document ids correspond to their relative numbers in the list you provide. But in some cases each document already has its own id. For this case there is an option 'id field name' as docs_id for CALL PQ.

Note that if the id cannot be found by the provided field name, the PQ rule will not be shown in the results.

This option is only available for CALL PQ via SQL.
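The difference between the two numbering schemes can be sketched in a few lines of Python. This is only a model of the behaviour described above, not Manticore code: `document_numbers` is a hypothetical helper, the `shift` parameter mirrors the `shift` option from the table earlier in this section, and `None` stands for a document whose id field is missing, whose matches would therefore not be reported.

```python
from typing import Dict, List, Optional


def document_numbers(docs: List[Dict[str, object]],
                     docs_id: Optional[str] = None,
                     shift: int = 0) -> List[Optional[object]]:
    """Return the identifier reported for each document in a batch."""
    if docs_id is None:
        # Default: 1-based position in the batch, plus an optional shift.
        return [position + shift for position in range(1, len(docs) + 1)]
    # With docs_id: take the identifier from the named field of each document.
    return [doc.get(docs_id) for doc in docs]


docs = [
    {"id": 123, "title": "nice pair of shoes", "color": "blue"},
    {"id": 456, "title": "beautiful bag"},
]
print(document_numbers(docs))                # [1, 2]
print(document_numbers(docs, docs_id="id"))  # [123, 456]
print(document_numbers(docs, shift=10))      # [11, 12]
```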

- SQL

CALL PQ('products', '[{"id": 123, "title": "nice pair of shoes", "color": "blue"}, {"id": 456, "title": "beautiful bag"}]', 1 as query, 'id' as docs_id, 1 as docs);

SQL response:

+---------------------+-----------+--------------+------+---------------------------+

| id | documents | query | tags | filters |

+---------------------+-----------+--------------+------+---------------------------+

| 1657852401006149664 | 456 | @title bag | | |

| 1657852401006149666 | 123 | @title shoes | | color IN ('blue', 'green') |

+---------------------+-----------+--------------+------+---------------------------+

If you provide documents as separate JSONs, CALL PQ has an option to skip invalid JSONs. In the example, note that in the 2nd and 3rd queries the 2nd JSON is invalid. Without 1 as skip_bad_json the 2nd query fails; adding it in the 3rd query avoids that. This option is not available via JSON over HTTP, as the whole JSON query must always be valid when sent via the HTTP protocol.
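If you would rather detect bad documents on the client before sending them, a pre-check along the following lines is one option. This Python sketch is an assumption about how such a check could look, not part of any Manticore client; `split_valid_json` is a hypothetical helper that reports the 1-based positions of the strings that fail to parse.

```python
import json
from typing import List, Tuple


def split_valid_json(raw_docs: List[str]) -> Tuple[List[dict], List[int]]:
    """Parse each string; return the parsed documents and 1-based positions of bad ones."""
    good, bad = [], []
    for position, raw in enumerate(raw_docs, start=1):
        try:
            good.append(json.loads(raw))
        except json.JSONDecodeError:
            bad.append(position)
    return good, bad


raw = [
    '{"title": "nice pair of shoes", "color": "blue"}',
    '{"title": "beautiful bag}',   # unterminated string, invalid JSON
]
good, bad = split_valid_json(raw)
print(len(good), bad)  # 1 [2]
```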

- SQL

CALL PQ('products', ('{"title": "nice pair of shoes", "color": "blue"}', '{"title": "beautiful bag"}'));

CALL PQ('products', ('{"title": "nice pair of shoes", "color": "blue"}', '{"title": "beautiful bag}'));

CALL PQ('products', ('{"title": "nice pair of shoes", "color": "blue"}', '{"title": "beautiful bag}'), 1 as skip_bad_json);

SQL response:

+---------------------+

| id |

+---------------------+

| 1657852401006149635 |

| 1657852401006149637 |

+---------------------+

ERROR 1064 (42000): Bad JSON objects in strings: 2

+---------------------+

| id |

+---------------------+

| 1657852401006149635 |

+---------------------+

Percolate queries were designed with high throughput and large data volumes in mind, so there are a few things you can do to optimize performance if you need lower latency and higher throughput.

A percolate table can be distributed in two modes, which determine how a percolate query works against it:

- Sparsed (default). Good when there are many documents and the PQ tables are mirrored. The batch of documents you pass is split into parts according to the number of agents, so each node receives and processes only a part of the documents from your request. This is beneficial when your set of documents is quite big, but the set of queries stored in the PQ table is quite small. Assuming all the hosts are mirrors, Manticore splits your set of documents and distributes the chunks among the mirrors. Once the agents are done with the queries, it collects and merges all the results and returns the final query set as if it came from a single table. You can use replication to help the process.

- Sharded. Good when there are many PQ rules and the rules are split among PQ tables. The whole document set is broadcast to all tables of the distributed PQ table without any initial split. This is beneficial when you push a relatively small set of documents, but the number of stored queries is huge. In this case it is more appropriate to store just a part of the PQ rules on each node and then merge the results returned from the nodes, which process one and the same set of documents against different sets of PQ rules. This mode has to be set explicitly since, first, it multiplies the network payload and, second, it expects tables with different PQ rules, which replication cannot provide out of the box.
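The two modes can be compared with a small Python simulation. It is a simplified model under stated assumptions, not Manticore's implementation: nodes are plain function calls, a rule is a `(rule id, predicate)` pair, and the names `run_node`, `sparsed`, `sharded` and `make_rule` are hypothetical.

```python
from typing import Callable, Dict, List, Set, Tuple

Doc = Dict[str, object]
Rule = Tuple[int, Callable[[Doc], bool]]   # (rule id, predicate)
Match = Tuple[int, int]                    # (rule id, 1-based document number)


def run_node(rules: List[Rule], numbered_docs: List[Tuple[int, Doc]]) -> Set[Match]:
    """One node checks the documents it received against the rules it stores."""
    return {(rid, n) for rid, pred in rules for n, doc in numbered_docs if pred(doc)}


def sparsed(mirrors: int, rules: List[Rule], docs: List[Doc]) -> Set[Match]:
    """Every mirror holds all rules; the documents are split among the mirrors."""
    numbered = list(enumerate(docs, start=1))
    chunks = [numbered[i::mirrors] for i in range(mirrors)]
    return set().union(*(run_node(rules, chunk) for chunk in chunks))


def sharded(rule_shards: List[List[Rule]], docs: List[Doc]) -> Set[Match]:
    """Every shard holds part of the rules; all documents go to every shard."""
    numbered = list(enumerate(docs, start=1))
    return set().union(*(run_node(shard, numbered) for shard in rule_shards))


def make_rule(rule_id: int, words: List[str], gid_ok: Callable[[int], bool]) -> Rule:
    """Rule matching when all words occur in the title and the gid filter passes."""
    return (rule_id, lambda d: all(w in str(d["title"]).split() for w in words)
            and gid_ok(int(d["gid"])))


rules = [
    make_rule(1, ["filter", "test"], lambda g: g >= 10),
    make_rule(2, ["angry"], lambda g: g >= 10 or g <= 3),
]
docs = [{"title": "angry test", "gid": 3}, {"title": "filter test doc2", "gid": 13}]

print(sorted(sparsed(2, rules, docs)))                 # [(1, 2), (2, 1)]
print(sorted(sharded([[rules[0]], [rules[1]]], docs)))  # [(1, 2), (2, 1)]
```

Both modes return the same merged result; they differ in what each node has to hold and how much data travels over the network.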

Let's assume you have table pq_d2 which is defined as:

table pq_d2

{

type = distributed

agent = 127.0.0.1:6712:pq

agent = 127.0.0.1:6712:ptitle

}

Each of 'pq' and 'ptitle' contains:

- SQL

- JSON

- PHP

- Python

- javascript

- Java

SELECT * FROM pq;

JSON:

POST /pq/pq/_search

PHP:

$params = [

'index' => 'pq',

'body' => [

]

];

$response = $client->pq()->search($params);

Python:

searchApi.search({"index":"pq","query":{"match_all":{}}})

javascript:

res = await searchApi.search({"index":"pq","query":{"match_all":{}}});

Java:

Map<String,Object> query = new HashMap<String,Object>();

query.put("match_all",null);

SearchRequest searchRequest = new SearchRequest();

searchRequest.setIndex("pq");

searchRequest.setQuery(query);

SearchResponse searchResponse = searchApi.search(searchRequest);

SQL response:

+------+-------------+------+-------------------+

| id | query | tags | filters |

+------+-------------+------+-------------------+

| 1 | filter test | | gid>=10 |

| 2 | angry | | gid>=10 OR gid<=3 |

+------+-------------+------+-------------------+

2 rows in set (0.01 sec)

JSON response:

{

"took":0,

"timed_out":false,

"hits":{

"total":2,

"hits":[

{

"_id":"1",

"_score":1,

"_source":{

"query":{ "ql":"filter test" },

"tags":"",

"filters":"gid>=10"

}

},

{

"_id":"2",

"_score":1,

"_source":{

"query":{"ql":"angry"},

"tags":"",

"filters":"gid>=10 OR gid<=3"

}

}

]

}

}

PHP response:

(

[took] => 0

[timed_out] =>

[hits] =>

(

[total] => 2

[hits] =>

(

[0] =>

(

[_id] => 1

[_score] => 1

[_source] =>

(

[query] =>

(

[ql] => filter test

)

[tags] =>

[filters] => gid>=10

)

),

[1] =>

(

[_id] => 2

[_score] => 1

[_source] =>

(

[query] =>

(

[ql] => angry

)

[tags] =>

[filters] => gid>=10 OR gid<=3

)

)

)

)

)

Python response:

{'hits': {'hits': [{u'_id': u'2811025403043381501',

u'_score': 1,

u'_source': {u'filters': u"gid>=10",

u'query': u'filter test',

u'tags': u''}},

{u'_id': u'2811025403043381502',

u'_score': 1,

u'_source': {u'filters': u"gid>=10 OR gid<=3",

u'query': u'angry',

u'tags': u''}}],

'total': 2},

'profile': None,

'timed_out': False,

'took': 0}

javascript response:

{"hits": {"hits": [{"_id": "2811025403043381501",

"_score": 1,

"_source": {"filters": "gid>=10",

"query": "filter test",

"tags": ""}},

{"_id": "2811025403043381502",

"_score": 1,

"_source": {"filters": "gid>=10 OR gid<=3",

"query": "angry",

"tags": ""}}],

"total": 2},

"timed_out": false,

"took": 0}

Java response:

class SearchResponse {

took: 0

timedOut: false

hits: class SearchResponseHits {

total: 2

maxScore: null

hits: [{_id=2811045522851233962, _score=1, _source={filters=gid>=10, query=filter test, tags=}}, {_id=2811045522851233951, _score=1, _source={filters=gid>=10 OR gid<=3, query=angry,tags=}}]

aggregations: null

}

profile: null

}

Then you run CALL PQ against the distributed table with a couple of documents.

- SQL

- JSON

- PHP

- Python

- javascript

- Java

CALL PQ ('pq_d2', ('{"title":"angry test", "gid":3 }', '{"title":"filter test doc2", "gid":13}'), 1 AS docs);

JSON:

POST /pq/pq/_search -d '

{

"query":

{

"percolate":

{

"documents" : [

{ "title": "angry test", "gid": 3 },

{ "title": "filter test doc2", "gid": 13 }

]

}

}

}

'

PHP:

$params = [

'index' => 'pq',

'body' => [

'query' => [

'percolate' => [

'documents' => [

[

'title'=>'angry test',

'gid' => 3

],

[

'title'=>'filter test doc2',

'gid' => 13

],

]

]

]

]

];

$response = $client->pq()->search($params);

Python:

searchApi.percolate('pq',{"query":{"percolate":{"documents":[{"title":"angry test","gid":3},{"title":"filter test doc2","gid":13}]}}})

javascript:

res = await searchApi.percolate('pq',{"query":{"percolate":{"documents":[{"title":"angry test","gid":3},{"title":"filter test doc2","gid":13}]}}});

Java:

percolateRequest = new PercolateRequest();

query = new HashMap<String,Object>(){{

put("percolate",new HashMap<String,Object >(){{

put("documents", new ArrayList<Object>(){{

add(new HashMap<String,Object >(){{

put("title","angry test");

put("gid",3);

}});

add(new HashMap<String,Object >(){{

put("title","filter test doc2");

put("gid",13);

}});

}});

}});

}};

percolateRequest.query(query);

searchApi.percolate("pq",percolateRequest);

SQL response:

+------+-----------+

| id | documents |

+------+-----------+

| 1 | 2 |

| 2 | 1 |

+------+-----------+

JSON response:

{

"took":0,

"timed_out":false,

"hits":{

"total":2,"hits":[

{

"_id":"2",

"_score":1,

"_source":{

"query":{"title":"angry"},

"tags":"",

"filters":"gid>=10 OR gid<=3"

}

},

{

"_id":"1",

"_score":1,

"_source":{

"query":{"ql":"filter test"},

"tags":"",

"filters":"gid>=10"

}

}

]

}

}

(

[took] => 0

[timed_out] =>

[hits] =>

(

[total] => 2

[hits] =>

(

[0] =>

(

[_index] => pq

[_type] => doc

[_id] => 2

[_score] => 1

[_source] =>

(

[query] =>

(

[ql] => angry

)

[tags] =>

[filters] => gid>=10 OR gid<=3

),

[fields] =>

(

[_percolator_document_slot] =>

(

[0] => 1

)

)

),

[1] =>

(

[_index] => pq

[_id] => 1

[_score] => 1

[_source] =>

(

[query] =>

(

[ql] => filter test

)

[tags] =>

[filters] => gid>=10

)

[fields] =>

(

[_percolator_document_slot] =>

(

[0] => 0

)

)

)

)

)

)

{'hits': {'hits': [{u'_id': u'2811025403043381480',

u'_index': u'pq',

u'_score': u'1',

u'_source': {u'query': {u'ql': u'angry'},u'tags':u'',u'filters':u"gid>=10 OR gid<=3"},

u'_type': u'doc',

u'fields': {u'_percolator_document_slot': [1]}},

{u'_id': u'2811025403043381501',

u'_index': u'pq',

u'_score': u'1',

u'_source': {u'query': {u'ql': u'filter test'},u'tags':u'',u'filters':u"gid>=10"},

u'_type': u'doc',

u'fields': {u'_percolator_document_slot': [1]}}],

'total': 2},

'profile': None,

'timed_out': False,

'took': 0}

{'hits': {'hits': [{u'_id': u'2811025403043381480',

u'_index': u'pq',

u'_score': u'1',

u'_source': {u'query': {u'ql': u'angry'},u'tags':u'',u'filters':u"gid>=10 OR gid<=3"},

u'_type': u'doc',

u'fields': {u'_percolator_document_slot': [1]}},

{u'_id': u'2811025403043381501',

u'_index': u'pq',

u'_score': u'1',

u'_source': {u'query': {u'ql': u'filter test'},u'tags':u'',u'filters':u"gid>=10"},

u'_type': u'doc',

u'fields': {u'_percolator_document_slot': [1]}}],

'total': 2},

'profile': None,

'timed_out': False,

'took': 0}

class SearchResponse {

took: 10

timedOut: false

hits: class SearchResponseHits {

total: 2

maxScore: 1

hits: [{_index=pq, _type=doc, _id=2811045522851234165, _score=1, _source={query={ql=@title angry}}, fields={_percolator_document_slot=[1]}}, {_index=pq, _type=doc, _id=2811045522851234166, _score=1, _source={query={ql=@title filter test doc2}}, fields={_percolator_document_slot=[2]}}]

aggregations: null

}

profile: null

}

That was an example of the default sparse mode. To demonstrate the sharded mode, let's create a distributed PQ table consisting of 2 local PQ tables and add 2 rules to "products1" and 1 rule to "products2":

create table products1(title text, color string) type='pq';

create table products2(title text, color string) type='pq';

create table products_distributed type='distributed' local='products1' local='products2';

INSERT INTO products1(query) values('@title bag');

INSERT INTO products1(query,filters) values('@title shoes', 'color=\'red\'');

INSERT INTO products2(query,filters) values('@title shoes', 'color in (\'blue\', \'green\')');

Now if you add 'sharded' as mode to CALL PQ, it will send the documents to all the agent tables (in this case just local tables, but they can be remote to utilize external hardware). This mode is not available via the JSON interface.

- SQL

CALL PQ('products_distributed', ('{"title": "nice pair of shoes", "color": "blue"}', '{"title": "beautiful bag"}'), 'sharded' as mode, 1 as query);

+---------------------+--------------+------+---------------------------+

| id | query | tags | filters |

+---------------------+--------------+------+---------------------------+

| 1657852401006149639 | @title bag | | |

| 1657852401006149643 | @title shoes | | color IN ('blue, 'green') |

+---------------------+--------------+------+---------------------------+

Note that the syntax of agent mirrors in the configuration (when several hosts are assigned to one agent line, separated with |) has nothing to do with the CALL PQ query mode, so each agent always represents one node regardless of the number of HA mirrors specified for this agent.
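To make the sharded-mode result above more concrete, here is a toy Python model of it. It is only a sketch under simplifying assumptions: the rule format (`word`, `colors`) and the functions `rule_matches` and `call_pq_sharded` are hypothetical, and the matching is far simpler than Manticore's real query engine. It only illustrates that every document is checked against the rules of every local table and the matched rules are merged.

```python
# Toy model of 'sharded' CALL PQ: all documents go to all local tables,
# and the rules that matched at least one document are merged.
# The rule format and matcher are illustrative assumptions, not Manticore internals.

def rule_matches(rule, doc):
    """True if the rule's word occurs in the title and the color filter passes."""
    words = doc.get("title", "").lower().split()
    if rule["word"] not in words:
        return False
    colors = rule.get("colors")  # None means the rule has no filter
    return colors is None or doc.get("color") in colors

def call_pq_sharded(tables, docs):
    """Check every document against every table; return the matched rule queries."""
    matched = []
    for rules in tables.values():
        for rule in rules:
            if any(rule_matches(rule, doc) for doc in docs):
                matched.append(rule["query"])
    return matched

tables = {
    "products1": [
        {"query": "@title bag", "word": "bag"},
        {"query": "@title shoes", "word": "shoes", "colors": {"red"}},
    ],
    "products2": [
        {"query": "@title shoes", "word": "shoes", "colors": {"blue", "green"}},
    ],
}
docs = [
    {"title": "nice pair of shoes", "color": "blue"},
    {"title": "beautiful bag"},
]

matched = call_pq_sharded(tables, docs)
print(matched)
```

As in the SQL output above, the "bag" rule from products1 and the blue/green "shoes" rule from products2 match, while the red "shoes" rule does not.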

In some cases you might want to get more details about the performance of a percolate query. For that purpose there is the option 1 as verbose, which is available only via SQL and saves more performance metrics. You can see them via the SHOW META query, which you can run after CALL PQ. See SHOW META for more info.

- 1 as verbose

- 0 as verbose

CALL PQ('products', ('{"title": "nice pair of shoes", "color": "blue"}', '{"title": "beautiful bag"}'), 1 as verbose); show meta;

CALL PQ('products', ('{"title": "nice pair of shoes", "color": "blue"}', '{"title": "beautiful bag"}'), 0 as verbose); show meta;

+---------------------+

| id |

+---------------------+

| 1657852401006149644 |

| 1657852401006149646 |

+---------------------+

+-------------------------+-----------+

| Name | Value |

+-------------------------+-----------+

| Total | 0.000 sec |

| Setup | 0.000 sec |

| Queries matched | 2 |

| Queries failed | 0 |

| Document matched | 2 |

| Total queries stored | 3 |

| Term only queries | 3 |

| Fast rejected queries | 0 |

| Time per query | 27, 10 |

| Time of matched queries | 37 |

+-------------------------+-----------+

+---------------------+

| id |

+---------------------+

| 1657852401006149644 |

| 1657852401006149646 |

+---------------------+

+-----------------------+-----------+

| Name | Value |

+-----------------------+-----------+

| Total | 0.000 sec |

| Queries matched | 2 |

| Queries failed | 0 |

| Document matched | 2 |

| Total queries stored | 3 |

| Term only queries | 3 |

| Fast rejected queries | 0 |

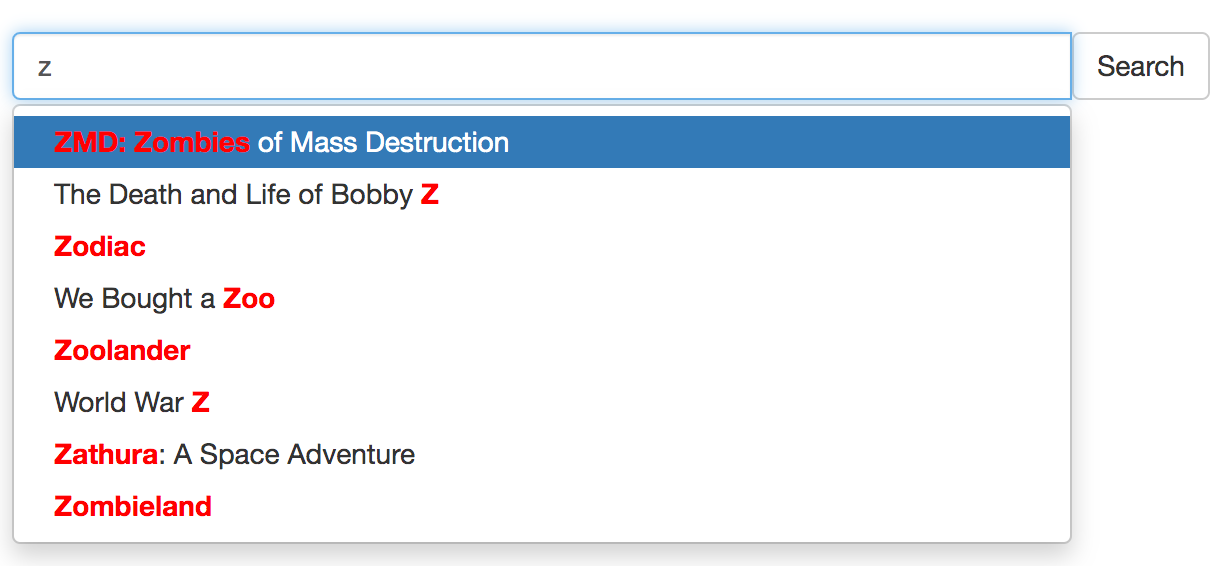

+-----------------------+-----------+Autocomplete (or word completion) is a feature in which an application predicts the rest of a word a user is typing. On websites it's used in search boxes, where a user starts to type a word and a dropdown with suggestions pops up so the user can select the ending from the list.

There are a few ways to do autocomplete in Manticore:

To autocomplete a sentence you can use infixed search. You can find the endings of a document's field by providing its beginning and:

- using the full-text operator * to match anything it substitutes

- optionally using ^ to start from the beginning of the field

- optionally using "" for phrase matching

- optionally highlighting the results so you don't have to fetch them in full to your application

There is an article about it in our blog and an interactive course. A quick example is:

- Let's assume you have a document: My cat loves my dog. The cat (Felis catus) is a domestic species of small carnivorous mammal.

- Then you can use ^, "" and * so that as the user is typing you make queries like: ^"m*", ^"my *", ^"my c*", ^"my ca*" and so on

- It will find the document, and if you also do highlighting you will get something like: <b>My cat</b> loves my dog. The cat ( ...
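The query-building step from the example above can be sketched in Python as follows. This is a minimal illustration: the function name `autocomplete_query` is hypothetical, and the backslash escaping of quotes is a simplifying assumption, so check the escaping rules of your client before relying on it.

```python
def autocomplete_query(typed: str) -> str:
    """Turn what the user has typed so far into a query like ^"my c*"."""
    # Assumption: backslash-escape characters that would end the phrase early.
    safe = typed.replace("\\", "\\\\").replace('"', '\\"')
    # ^ anchors to the field start, "" makes it a phrase, * completes the last word.
    return f'^"{safe}*"'

queries = [autocomplete_query(t) for t in ["m", "my ", "my c", "my ca"]]
for q in queries:
    print(q)
```

Each resulting string would then be passed as the full-text part of a search, for example inside MATCH().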

In some cases all you need is just autocomplete a single word or a couple of words. In this case you can use CALL KEYWORDS.

CALL KEYWORDS is available via the SQL interface and provides a way to check how keywords are tokenized or to retrieve the tokenized forms of particular keywords. If the table enables infixes, it lets you quickly find possible endings for given keywords, which makes it usable for autocomplete.

This is a good alternative to general infixed search as it provides higher performance: all it needs is the table's dictionary, not the documents themselves.

CALL KEYWORDS(text, table [, options])

The CALL KEYWORDS statement splits text into keywords. It returns tokenized and normalized forms of the keywords and, optionally, keyword statistics. It also returns the position of each keyword in the query and all forms of tokenized keywords in case the table enables lemmatizers.

| Parameter | Description |

|---|---|

| text | Text to break down to keywords |

| table | Name of the table from which to take the text processing settings |

| 0/1 as stats | Show statistics of keywords, default is 0 |

| 0/1 as fold_wildcards | Fold wildcards, default is 0 |

| 0/1 as fold_lemmas | Fold morphological lemmas, default is 0 |

| 0/1 as fold_blended | Fold blended words, default is 0 |

| N as expansion_limit | Override expansion_limit defined in the server configuration, default is 0 (use value from the configuration) |

| docs/hits as sort_mode | Sort output results by either 'docs' or 'hits'. Default no sorting |

The examples show how it works, assuming the user is trying to get an autocomplete for "my cat ...". On the application side, all you need to do is suggest to the user the endings from the "normalized" column for each new word. It often makes sense to sort by hits or docs using 'hits' as sort_mode or 'docs' as sort_mode.

- Examples

MySQL [(none)]> CALL KEYWORDS('m*', 't', 1 as stats);

+------+-----------+------------+------+------+

| qpos | tokenized | normalized | docs | hits |

+------+-----------+------------+------+------+

| 1 | m* | my | 1 | 2 |

| 1 | m* | mammal | 1 | 1 |

+------+-----------+------------+------+------+

MySQL [(none)]> CALL KEYWORDS('my*', 't', 1 as stats);

+------+-----------+------------+------+------+

| qpos | tokenized | normalized | docs | hits |

+------+-----------+------------+------+------+

| 1 | my* | my | 1 | 2 |

+------+-----------+------------+------+------+

MySQL [(none)]> CALL KEYWORDS('c*', 't', 1 as stats, 'hits' as sort_mode);

+------+-----------+-------------+------+------+

| qpos | tokenized | normalized | docs | hits |

+------+-----------+-------------+------+------+

| 1 | c* | cat | 1 | 2 |

| 1 | c* | carnivorous | 1 | 1 |

| 1 | c* | catus | 1 | 1 |

+------+-----------+-------------+------+------+

MySQL [(none)]> CALL KEYWORDS('ca*', 't', 1 as stats, 'hits' as sort_mode);

+------+-----------+-------------+------+------+

| qpos | tokenized | normalized | docs | hits |

+------+-----------+-------------+------+------+

| 1 | ca* | cat | 1 | 2 |

| 1 | ca* | carnivorous | 1 | 1 |

| 1 | ca* | catus | 1 | 1 |

+------+-----------+-------------+------+------+

MySQL [(none)]> CALL KEYWORDS('cat*', 't', 1 as stats, 'hits' as sort_mode);

+------+-----------+------------+------+------+

| qpos | tokenized | normalized | docs | hits |

+------+-----------+------------+------+------+

| 1 | cat* | cat | 1 | 2 |

| 1 | cat* | catus | 1 | 1 |

+------+-----------+------------+------+------+

There is a nice trick to improve the above algorithm: use bigram_index. When it is enabled for the table, the index contains not just single words, but also each pair of adjacent words indexed as a separate token.

This makes it possible to predict not just the current word's ending, but the next word too, which is especially beneficial for autocomplete.
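To illustrate why bigrams help, here is a small Python sketch that builds single-word tokens plus adjacent-pair tokens and completes a prefix against them. It is only a conceptual model: the tokenizer (lowercase letters and digits) and the names `unigrams_and_bigrams` and `complete` are assumptions for illustration, not how Manticore stores its dictionary.

```python
import re

def unigrams_and_bigrams(text: str) -> list[str]:
    """Return single words plus every pair of adjacent words as one token."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    pairs = [f"{a} {b}" for a, b in zip(words, words[1:])]
    return words + pairs

def complete(prefix: str, tokens: list[str]) -> list[str]:
    """All distinct tokens that start with the prefix, sorted alphabetically."""
    return sorted({t for t in tokens if t.startswith(prefix)})

tokens = unigrams_and_bigrams("My cat loves my dog.")
print(complete("my", tokens))
```

With pairs in the token list, the prefix "my" completes not only to "my" but also to "my cat" and "my dog".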

- Examples

MySQL [(none)]> CALL KEYWORDS('m*', 't', 1 as stats, 'hits' as sort_mode);

+------+-----------+------------+------+------+

| qpos | tokenized | normalized | docs | hits |

+------+-----------+------------+------+------+

| 1 | m* | my | 1 | 2 |

| 1 | m* | mammal | 1 | 1 |

| 1 | m* | my cat | 1 | 1 |

| 1 | m* | my dog | 1 | 1 |

+------+-----------+------------+------+------+

MySQL [(none)]> CALL KEYWORDS('my*', 't', 1 as stats, 'hits' as sort_mode);

+------+-----------+------------+------+------+

| qpos | tokenized | normalized | docs | hits |

+------+-----------+------------+------+------+

| 1 | my* | my | 1 | 2 |

| 1 | my* | my cat | 1 | 1 |

| 1 | my* | my dog | 1 | 1 |

+------+-----------+------------+------+------+

MySQL [(none)]> CALL KEYWORDS('c*', 't', 1 as stats, 'hits' as sort_mode);

+------+-----------+--------------------+------+------+

| qpos | tokenized | normalized | docs | hits |

+------+-----------+--------------------+------+------+

| 1 | c* | cat | 1 | 2 |

| 1 | c* | carnivorous | 1 | 1 |

| 1 | c* | carnivorous mammal | 1 | 1 |

| 1 | c* | cat felis | 1 | 1 |

| 1 | c* | cat loves | 1 | 1 |

| 1 | c* | catus | 1 | 1 |

| 1 | c* | catus is | 1 | 1 |

+------+-----------+--------------------+------+------+

MySQL [(none)]> CALL KEYWORDS('ca*', 't', 1 as stats, 'hits' as sort_mode);

+------+-----------+--------------------+------+------+

| qpos | tokenized | normalized | docs | hits |

+------+-----------+--------------------+------+------+

| 1 | ca* | cat | 1 | 2 |

| 1 | ca* | carnivorous | 1 | 1 |

| 1 | ca* | carnivorous mammal | 1 | 1 |

| 1 | ca* | cat felis | 1 | 1 |

| 1 | ca* | cat loves | 1 | 1 |

| 1 | ca* | catus | 1 | 1 |

| 1 | ca* | catus is | 1 | 1 |

+------+-----------+--------------------+------+------+

MySQL [(none)]> CALL KEYWORDS('cat*', 't', 1 as stats, 'hits' as sort_mode);

+------+-----------+------------+------+------+

| qpos | tokenized | normalized | docs | hits |

+------+-----------+------------+------+------+

| 1 | cat* | cat | 1 | 2 |

| 1 | cat* | cat felis | 1 | 1 |

| 1 | cat* | cat loves | 1 | 1 |

| 1 | cat* | catus | 1 | 1 |

| 1 | cat* | catus is | 1 | 1 |

+------+-----------+------------+------+------+

CALL KEYWORDS supports distributed tables, so no matter how big your data set is, you can benefit from using it.
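On the application side, the CALL KEYWORDS approach might look roughly like the Python sketch below. It builds the statement for a prefix and extracts suggestions from the "normalized" column of the returned rows. The helper names `keywords_statement` and `endings` are hypothetical, the quote escaping is a simplifying assumption, and the rows are hard-coded from the 'ca*' example above instead of being fetched from a server.

```python
def keywords_statement(prefix: str, table: str) -> str:
    """Build a CALL KEYWORDS statement for the word being typed, sorted by hits."""
    # Assumption: simple backslash escaping for the SQL string literal.
    escaped = prefix.replace("\\", "\\\\").replace("'", "\\'")
    return f"CALL KEYWORDS('{escaped}*', '{table}', 1 as stats, 'hits' as sort_mode)"

def endings(rows: list[dict], limit: int = 5) -> list[str]:
    """Take the 'normalized' column, most frequent (by hits) first."""
    ranked = sorted(rows, key=lambda r: int(r["hits"]), reverse=True)
    return [r["normalized"] for r in ranked[:limit]]

# Rows as a MySQL client could return them for the 'ca*' example.
rows = [
    {"qpos": "1", "tokenized": "ca*", "normalized": "cat", "docs": "1", "hits": "2"},
    {"qpos": "1", "tokenized": "ca*", "normalized": "carnivorous", "docs": "1", "hits": "1"},
    {"qpos": "1", "tokenized": "ca*", "normalized": "catus", "docs": "1", "hits": "1"},
]

statement = keywords_statement("ca", "t")
suggestions = endings(rows)
print(statement)
print(suggestions)
```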

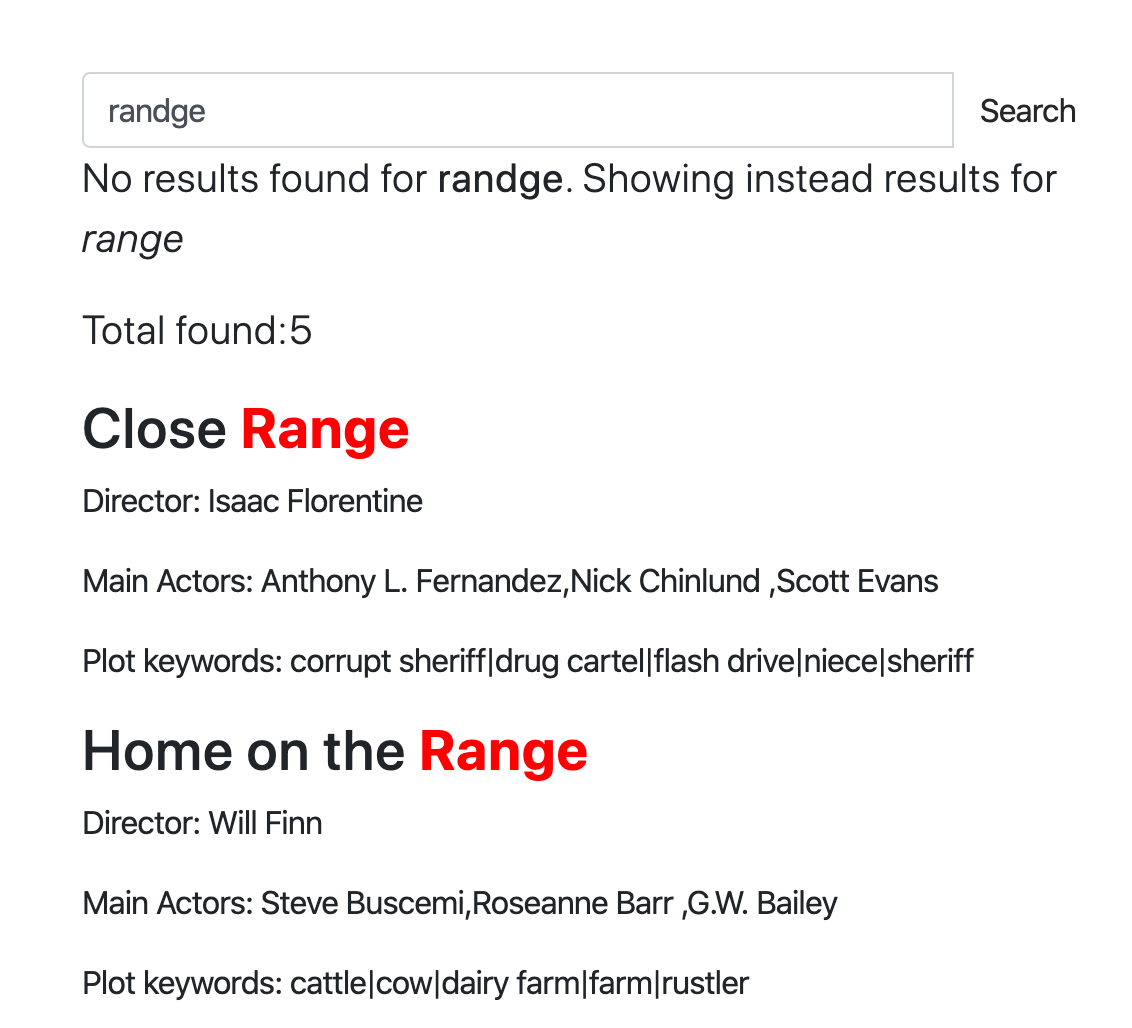

Spell correction also known as:

- Auto correction

- Text correction

- Fixing a spelling error

- Typo tolerance

- "Did you mean?"

and so on, is a software functionality that suggests alternatives to, or automatically corrects, the text you have typed. The concept of correcting typed text dates back to the 1960s, when a computer scientist named Warren Teitelman, who also invented the "undo" command, came up with a philosophy of computing called D.W.I.M., or "Do What I Mean." Rather than programming computers to accept only perfectly formatted instructions, Teitelman said we should program them to recognize obvious mistakes.

The first well known product which provided spell correction functionality was Microsoft Word 6.0 released in 1993.

There are a few ways spell correction can be done, but the important thing is that there is no purely programmatic way to convert your mistyped "ipone" into "iphone" (at least with decent quality). Mostly there has to be a data set the system is based on. The data set can be:

- A dictionary of properly spelled words. In turn, it can be:

  - Based on your real data. The idea here is that the spelling in a dictionary made up from your data is mostly correct, so for each typed word the system just tries to find the most similar word (we'll speak about how exactly it can be done with Manticore shortly)

  - Based on an external dictionary which has nothing to do with your data. The problem which may arise here is that your data and the external dictionary can be too different: some words may be missing in the dictionary, some words may be missing in your data.

- Not just dictionary-based, but context-aware, e.g. "white ber" would be corrected to "white bear" while "dark ber" would be corrected to "dark beer". The context may be not just a neighbouring word in your query, but your location, the time of day, the current sentence's grammar (to decide, say, whether to change "there" to "their"), your search history and virtually anything else that can affect your intent.

- Another classical approach is to use previous search queries as the data set for spell correction. It's used even more in autocomplete functionality, but makes sense for autocorrect too. The idea here is that users mostly spell correctly, so we can use words from their search history as a source of truth even if we don't have the words in our documents and don't use an external dictionary. Context-awareness is also possible here.

Manticore provides commands CALL QSUGGEST and CALL SUGGEST that can be used for the purpose of automatic spell correction.

They are both available via SQL only and the general syntax is:

CALL QSUGGEST(word, table [,options])

CALL SUGGEST(word, table [,options])

options: N as option_name[, M as another_option, ...]

These commands provide for a given word all suggestions from the dictionary. They work only on tables with infixing enabled and dict=keywords. They return the suggested keywords, Levenshtein distance between the suggested and original keywords and the docs statistics of the suggested keyword.

If the first parameter is not a single word, but multiple, then:

- CALL QSUGGEST will return suggestions only for the last word, ignoring the rest

- CALL SUGGEST will return suggestions only for the first word

That's the only difference between them. Several options are supported for customization:

| Option | Description | Default |

|---|---|---|

| limit | Returns N top matches | 5 |

| max_edits | Keeps only dictionary words whose Levenshtein distance is less than or equal to N | 4 |

| result_stats | Provides Levenshtein distance and document count of the found words | 1 (enabled) |

| delta_len | Keeps only dictionary words whose length difference is less than N | 3 |

| max_matches | Number of matches to keep | 25 |

| reject | Rejected words are matches that are not better than those already in the match queue. They are put in a rejected queue that gets reset in case one actually can go in the match queue. This parameter defines the size of the rejected queue (as reject*max(max_matched,limit)). If the rejected queue is filled, the engine stops looking for potential matches | 4 |

| result_line | alternate mode to display the data by returning all suggests, distances and docs each per one row | 0 |

| non_char | do not skip dictionary words with non alphabet symbols | 0 (skip such words) |
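To give a feel for what limit, max_edits and delta_len control, here is a small dictionary-based suggester in Python. It is a simplified sketch, not Manticore's implementation: it scans the whole dictionary with a textbook Levenshtein distance, while the real engine relies on infixes to find candidates, so results can differ. The names `levenshtein` and `suggest` and the toy dictionary are assumptions for illustration.

```python
def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, 1):
        cur = [i]
        for j, cb in enumerate(b, 1):
            cur.append(min(prev[j] + 1,                 # deletion
                           cur[j - 1] + 1,              # insertion
                           prev[j - 1] + (ca != cb)))   # substitution or match
        prev = cur
    return prev[-1]

def suggest(word, dictionary, limit=5, max_edits=4, delta_len=3):
    """Return up to `limit` tuples (suggestion, distance, docs), closest first."""
    found = []
    for candidate, docs in dictionary.items():
        # delta_len: keep words whose length difference is less than N.
        if abs(len(candidate) - len(word)) >= delta_len:
            continue
        distance = levenshtein(word, candidate)
        # max_edits: keep words within N edits.
        if distance <= max_edits:
            found.append((candidate, distance, docs))
    # Smaller distance first, then more documents first.
    found.sort(key=lambda r: (r[1], -r[2]))
    return found[:limit]

# Toy dictionary: word -> number of documents containing it.
words = "crossbody bag with tassel microfiber sheet set pet hair remover glove"
dictionary = {w: 1 for w in words.split()}

result = suggest("crossbudy", dictionary, max_edits=1)
print(result)
```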

To show how it works, let's create a table and add a few documents to it.

create table products(title text) min_infix_len='2';

insert into products values (0,'Crossbody Bag with Tassel'), (0,'microfiber sheet set'), (0,'Pet Hair Remover Glove');

As you can see in the example below, the mistyped "crossbUdy" gets corrected to "crossbody". In addition, by default CALL SUGGEST/QSUGGEST return:

- distance - the Levenshtein distance, i.e. how many edits were needed to convert the given word to the suggestion

- docs - the number of documents that contain the suggested word

To disable the display of these statistics, use the option 0 as result_stats.

- Example

call suggest('crossbudy', 'products');

+-----------+----------+------+

| suggest | distance | docs |

+-----------+----------+------+

| crossbody | 1 | 1 |

+-----------+----------+------+

If the first parameter is not a single word, but multiple, then CALL SUGGEST will return suggestions only for the first word.

- Example

call suggest('bagg with tasel', 'products');

+---------+----------+------+

| suggest | distance | docs |

+---------+----------+------+

| bag | 1 | 1 |

+---------+----------+------+

If the first parameter is not a single word, but multiple, then CALL QSUGGEST will return suggestions only for the last word.

- Example

CALL QSUGGEST('bagg with tasel', 'products');

+---------+----------+------+

| suggest | distance | docs |

+---------+----------+------+

| tassel | 1 | 1 |

+---------+----------+------+

Using 1 as result_line in the options turns on an alternate display mode that returns the suggestions, distances and docs each in its own row.

- Example

CALL QSUGGEST('bagg with tasel', 'products', 1 as result_line);

+----------+--------+

| name | value |

+----------+--------+

| suggests | tassel |

| distance | 1 |

| docs | 1 |

+----------+--------+

This interactive course demonstrates online how it works on a web page and provides different examples.

Query cache stores a compressed result set in memory, and then reuses it for subsequent queries where possible. You can configure it using the following directives:

- qcache_max_bytes, a limit on the RAM use for cached queries storage. Defaults to 16 MB. Setting qcache_max_bytes to 0 completely disables the query cache.

- qcache_thresh_msec, the minimum wall query time to cache. Queries that completed faster than this will not be cached. Defaults to 3000 msec, or 3 seconds.

- qcache_ttl_sec, cached entry TTL, or time to live. Queries will stay cached for this long. Defaults to 60 seconds, or 1 minute.

These settings can be changed on the fly using the SET GLOBAL statement:

mysql> SET GLOBAL qcache_max_bytes=128000000;

These changes are applied immediately, and the cached result sets that no longer satisfy the constraints are immediately discarded. When reducing the cache size on the fly, MRU (most recently used) result sets win.

Query cache works as follows. When it's enabled, every full-text search result gets completely stored in memory. That happens after full-text matching, filtering, and ranking, so basically we store total_found {docid,weight} pairs. Compressed matches can consume anywhere from 2 bytes to 12 bytes per match on average, mostly depending on the deltas between the subsequent docids. Once the query completes, we check the wall time and size thresholds, and either save that compressed result set for reuse, or discard it.

Note that the query cache impact on RAM is thus not limited by qcache_max_bytes! If you run, say, 10 concurrent queries, each of them matching up to 1M matches (after filters), then the peak temporary RAM use will be in the 40 MB to 240 MB range, even if in the end the queries are quick enough and do not get cached.

Queries can then use cache when the table, the full-text query (i.e. MATCH() contents), and the ranker are all a match, and filters are compatible. Meaning:

- The full-text part within MATCH() must be a bytewise match. Add a single extra space, and that is now a different query where the query cache is concerned.

- The ranker (and its parameters if any, for user-defined rankers) must be a bytewise match.

- The filters must be a superset of the original filters. That is, you can add extra filters and still hit the cache. (In this case, the extra filters will be applied to the cached result.) But if you remove one, that will be a new query again.
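The compatibility rules above can be summarized in a short Python sketch. It is a conceptual model only: the `Query` class and `can_reuse` function are hypothetical, and filters are reduced to a set of strings, which is much simpler than how the engine represents them.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Query:
    table: str
    match: str          # contents of MATCH(), compared byte for byte
    ranker: str         # ranker name plus parameters, compared byte for byte
    filters: frozenset  # simplified: each filter is just a string

def can_reuse(cached: Query, new: Query) -> bool:
    """True if the new query may be served from the cached result set."""
    return (
        cached.table == new.table
        and cached.match == new.match
        and cached.ranker == new.ranker
        # New filters must include all cached ones; extras get applied on top.
        and new.filters >= cached.filters
    )

cached = Query("t", "my cat", "bm25", frozenset({"gid>=10"}))
extra_filter = Query("t", "my cat", "bm25", frozenset({"gid>=10", "color='red'"}))
extra_space = Query("t", "my  cat", "bm25", frozenset({"gid>=10"}))
removed_filter = Query("t", "my cat", "bm25", frozenset())

print(can_reuse(cached, extra_filter))    # extra filter: still a cache hit
print(can_reuse(cached, extra_space))     # different MATCH() bytes: miss
print(can_reuse(cached, removed_filter))  # filter removed: miss
```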

Cache entries expire with TTL, and also get invalidated on table rotation, or on TRUNCATE, or on ATTACH. Note that at the moment entries are not invalidated on arbitrary RT table writes! So a cached query might be returning older results for the duration of its TTL.

Current cache status can be inspected with SHOW STATUS through the qcache_XXX variables:

mysql> SHOW STATUS LIKE 'qcache%';

+-----------------------+----------+

| Counter | Value |

+-----------------------+----------+

| qcache_max_bytes | 16777216 |

| qcache_thresh_msec | 3000 |

| qcache_ttl_sec | 60 |

| qcache_cached_queries | 0 |

| qcache_used_bytes | 0 |

| qcache_hits | 0 |

+-----------------------+----------+

6 rows in set (0.00 sec)