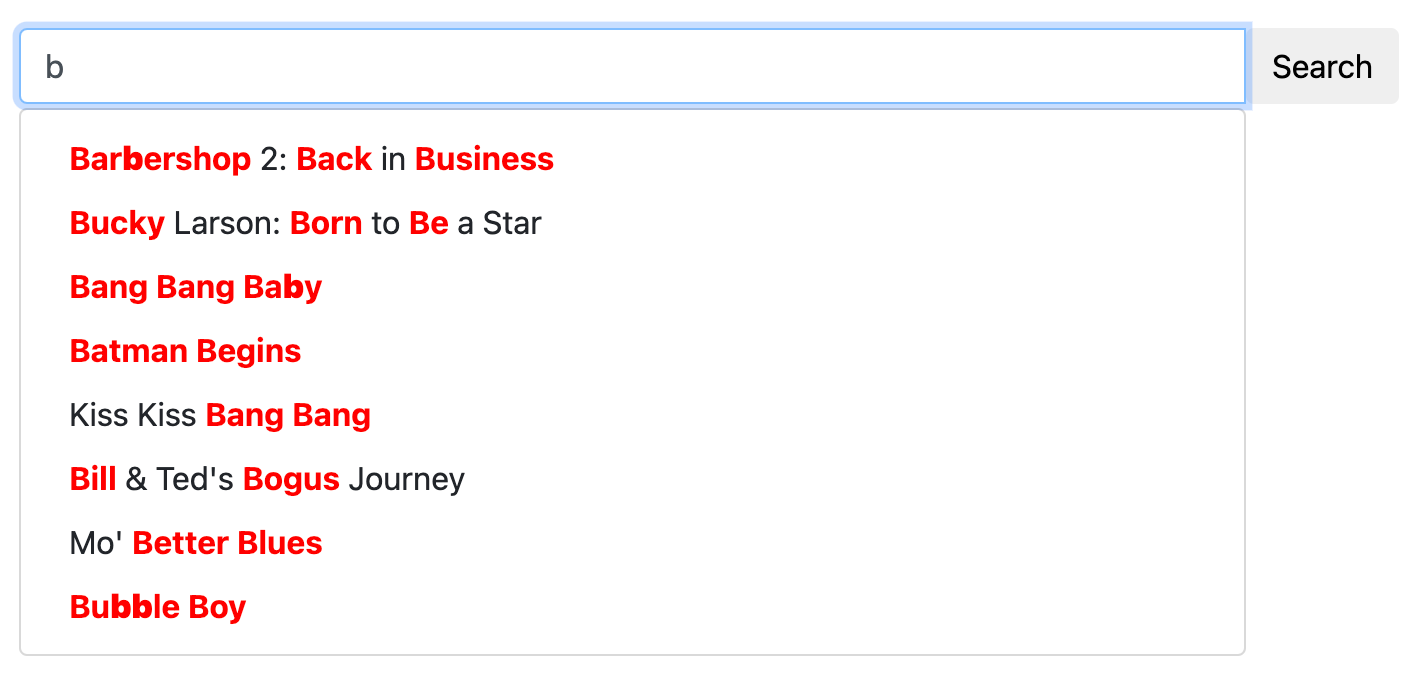

Faceted search is as crucial to a modern search application as autocomplete, spell correction, and search keyword highlighting, especially in e-commerce products.

Faceted search comes in handy when dealing with large quantities of data and various interconnected properties, such as size, color, manufacturer, or other factors. When querying vast amounts of data, search results frequently include numerous entries that don't match the user's expectations. Faceted search enables the end user to explicitly define the criteria they want their search results to satisfy.

In Manticore Search, there's an optimization that maintains the result set of the original query and reuses it for each facet calculation. Since the aggregations are applied to an already calculated subset of documents, they're fast, and the total execution time can often be only slightly longer than the initial query. Facets can be added to any query, and the facet can be any attribute or expression. A facet result includes the facet values and the facet counts. Facets can be accessed using the SQL SELECT statement by declaring them at the very end of the query.
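The idea of reusing one result set for every facet can be sketched in plain Python. This is only a conceptual illustration, not Manticore's internal code: the `DOCS` list and the `search_with_facets` helper are hypothetical, and the field names are borrowed from the `facetdemo` examples.

```python
from collections import Counter

# Hypothetical in-memory "table"; field names mirror the facetdemo examples.
DOCS = [
    {"id": 1, "price": 306, "brand_id": 1},
    {"id": 2, "price": 400, "brand_id": 10},
    {"id": 3, "price": 306, "brand_id": 10},
    {"id": 4, "price": 229, "brand_id": 9},
    {"id": 5, "price": 400, "brand_id": 10},
]


def search_with_facets(docs, predicate, facet_fields):
    """Filter once, then count every facet over the same matched subset."""
    matched = [doc for doc in docs if predicate(doc)]  # the "original query"
    facets = {
        field: Counter(doc[field] for doc in matched)  # reuse, no re-filtering
        for field in facet_fields
    }
    return matched, facets


matched, facets = search_with_facets(
    DOCS, lambda doc: doc["price"] >= 300, ["price", "brand_id"]
)
print(len(matched))              # 4
print(dict(facets["price"]))     # {306: 2, 400: 2}
print(dict(facets["brand_id"]))  # {1: 1, 10: 3}
```

Each facet costs one pass over the already matched documents, which is why adding facets tends to add little to the total query time.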

The facet values can originate from an attribute, a JSON property within a JSON attribute, or an expression. Facet values can also be aliased, but the alias must be unique across all result sets (the main query result set and the result sets of the other facets). The facet value is derived from the aggregated attribute/expression, but it can also come from another attribute/expression.

FACET {expr_list} [BY {expr_list}] [ALL FILTERS | FILTERS {expr_list} | EXCLUDE FILTERS {expr_list}] [MODE {strict | auto | max}] [DISTINCT {field_name}] [ORDER BY {expr | FACET()} {ASC | DESC}] [LIMIT [offset,] count]

Multiple facet declarations must be separated by whitespace.
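As a rough sketch of how the clauses fit together, the following Python helper assembles a statement with several FACET declarations. The `build_facet_query` function and its dictionary format are hypothetical and cover only the BY, ORDER BY, and LIMIT parts of the syntax. It performs plain string concatenation, so it should only be fed trusted input.

```python
def build_facet_query(select_sql, facets):
    """Append FACET clauses to a SELECT statement, separated by whitespace.

    `facets` is a list of dicts with a required "expr" key and optional
    "by", "order_by", and "limit" keys (a hypothetical helper format).
    """
    clauses = []
    for facet in facets:
        parts = ["FACET", facet["expr"]]
        if "by" in facet:
            parts += ["BY", facet["by"]]
        if "order_by" in facet:
            parts += ["ORDER BY", facet["order_by"]]
        if "limit" in facet:
            parts += ["LIMIT", str(facet["limit"])]
        clauses.append(" ".join(parts))
    # Drop any trailing semicolon so the facets land at the very end of the query.
    return " ".join([select_sql.rstrip("; ")] + clauses) + ";"


sql = build_facet_query(
    "SELECT *, price AS aprice FROM facetdemo LIMIT 10",
    [{"expr": "price", "limit": 10}, {"expr": "brand_id", "limit": 5}],
)
print(sql)
# SELECT *, price AS aprice FROM facetdemo LIMIT 10 FACET price LIMIT 10 FACET brand_id LIMIT 5;
```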

Facets can be defined in the aggs node:

"aggs" :

{

"group name" :

{

"terms" :

{

"field":"attribute name",

"size": 1000

},

"sort": [ {"attribute name": { "order":"asc" }} ]

}

}

where:

- group name is an alias assigned to the aggregation
- field value must contain the name of the attribute or expression being faceted
- optional size specifies the maximum number of buckets to include in the result. When not specified, it inherits the main query's limit. More details can be found in the Size of facet result section.
- optional sort specifies an array of attributes and/or additional properties using the same syntax as the "sort" parameter in the main query.
- optional top-level facet_filter_mode controls how all aggregations inherit filters from the main query. Supported values are strict, auto, and max.
- optional per-aggregation mode overrides the inherited mode for that aggregation. Supported values are strict, auto, and max. filter_mode is kept as a backward-compatible alias.
- optional per-aggregation filters explicitly lists which main-query attribute filters should be applied to that aggregation.
- optional per-aggregation exclude_filters explicitly lists which main-query attribute filters should not be applied to that aggregation.

auto and max facet result sets can include a status bucket marker. Returned values are selected, available, and unavailable.
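The request body described above can be put together programmatically. The sketch below builds it as a plain Python dictionary. The `terms_agg` helper is a hypothetical name introduced for illustration, and only the field, size, and sort parameters are covered.

```python
import json


def terms_agg(field, size=None, sort=None):
    """Build one aggregation entry: a "terms" node plus an optional "sort"."""
    terms = {"field": field}
    if size is not None:
        terms["size"] = size
    agg = {"terms": terms}
    if sort is not None:
        agg["sort"] = sort  # "sort" sits next to "terms", not inside it
    return agg


body = {
    "table": "facetdemo",
    "query": {"match_all": {}},
    "limit": 5,
    "aggs": {
        "group_property": terms_agg("price", size=10),
        "group_brand_id": terms_agg(
            "brand_id", size=5, sort=[{"brand_id": {"order": "asc"}}]
        ),
    },
}
print(json.dumps(body, indent=2))
```

The printed JSON can then be sent as the body of a /search request.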

The result set will contain an aggregations node with the returned facets, where key is the aggregated value and doc_count is the aggregation count.

"aggregations": {

"group name": {

"buckets": [

{

"key": 10,

"doc_count": 1019

},

{

"key": 9,

"doc_count": 954

},

{

"key": 8,

"doc_count": 1021

},

{

"key": 7,

"doc_count": 1011

},

{

"key": 6,

"doc_count": 997

}

]

}

}

- SQL

- JSON

- PHP

- Python

- Python-asyncio

- Javascript

- Java

- C#

- Rust

- TypeScript

- Go

SELECT *, price AS aprice FROM facetdemo LIMIT 10 FACET price LIMIT 10 FACET brand_id LIMIT 5;

POST /search -d '

{

"table" : "facetdemo",

"query" : {"match_all" : {} },

"limit": 5,

"aggs" :

{

"group_property" :

{

"terms" :

{

"field":"price"

}

},

"group_brand_id" :

{

"terms" :

{

"field":"brand_id"

}

}

}

}

'

$index->setName('facetdemo');

$search = $index->search('');

$search->limit(5);

$search->facet('price','price');

$search->facet('brand_id','group_brand_id');

$results = $search->get();

res = searchApi.search({"table":"facetdemo","query":{"match_all":{}},"limit":5,"aggs":{"group_property":{"terms":{"field":"price",}},"group_brand_id":{"terms":{"field":"brand_id"}}}})

res = await searchApi.search({"table":"facetdemo","query":{"match_all":{}},"limit":5,"aggs":{"group_property":{"terms":{"field":"price",}},"group_brand_id":{"terms":{"field":"brand_id"}}}})

res = await searchApi.search({"table":"facetdemo","query":{"match_all":{}},"limit":5,"aggs":{"group_property":{"terms":{"field":"price",}},"group_brand_id":{"terms":{"field":"brand_id"}}}});

aggs = new HashMap<String,Object>(){{

put("group_property", new HashMap<String,Object>(){{

put("terms", new HashMap<String,Object>(){{

put("field","price");

}});

}});

put("group_brand_id", new HashMap<String,Object>(){{

put("terms", new HashMap<String,Object>(){{

put("field","brand_id");

}});

}});

}};

searchRequest = new SearchRequest();

searchRequest.setIndex("facetdemo");

searchRequest.setLimit(5);

query = new HashMap<String,Object>();

query.put("match_all",null);

searchRequest.setQuery(query);

searchRequest.setAggs(aggs);

searchResponse = searchApi.search(searchRequest);

var agg1 = new Aggregation("group_property", "price");

var agg2 = new Aggregation("group_brand_id", "brand_id");

object query = new { match_all=null };

var searchRequest = new SearchRequest("facetdemo", query);

searchRequest.Limit = 5;

searchRequest.Aggs = new List<Aggregation> {agg1, agg2};

var searchResponse = searchApi.Search(searchRequest);

let query = SearchQuery::new();

let aggTerms1 = AggTerms::new("price");

let agg1 = Aggregation {

terms: Some(Box::new(aggTerms1)),

..Default::default()

};

let aggTerms2 = AggTerms::new("brand_id");

let agg2 = Aggregation {

terms: Some(Box::new(aggTerms2)),

..Default::default()

};

let mut aggs = HashMap::new();

aggs.insert("group_property".to_string(), agg1);

aggs.insert("group_brand_id".to_string(), agg2);

let search_req = SearchRequest {

table: "facetdemo".to_string(),

query: Some(Box::new(query)),

aggs: serde_json::json!(aggs),

limit: serde_json::json!(5),

..Default::default()

};

let search_res = search_api.search(search_req).await;

res = await searchApi.search({

index: 'test',

query: { match_all:{} },

aggs: {

name_group: {

terms: { field : 'name' }

},

cat_group: {

terms: { field: 'cat' }

}

}

});

query := map[string]interface{} {}

searchRequest.SetQuery(query)

aggByName := manticoreclient.NewAggregation()

aggTerms := manticoreclient.NewAggregationTerms()

aggTerms.SetField("name")

aggByName.SetTerms(aggTerms)

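aggByCat := manticoreclient.NewAggregation()

// Use a separate terms object so the "name" facet configured above is not overwritten
aggTermsCat := manticoreclient.NewAggregationTerms()

aggTermsCat.SetField("cat")

aggByCat.SetTerms(aggTermsCat)

aggs := map[string]Aggregation{ "name_group": aggByName, "cat_group": aggByCat }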

searchRequest.SetAggs(aggs)

res, _, _ := apiClient.SearchAPI.Search(context.Background()).SearchRequest(*searchRequest).Execute()

+------+-------+----------+---------------------+------------+-------------+---------------------------------------+------------+--------+

| id | price | brand_id | title | brand_name | property | j | categories | aprice |

+------+-------+----------+---------------------+------------+-------------+---------------------------------------+------------+--------+

| 1 | 306 | 1 | Product Ten Three | Brand One | Six_Ten | {"prop1":66,"prop2":91,"prop3":"One"} | 10,11 | 306 |

| 2 | 400 | 10 | Product Three One | Brand Ten | Four_Three | {"prop1":69,"prop2":19,"prop3":"One"} | 13,14 | 400 |

...

| 9 | 560 | 6 | Product Two Five | Brand Six | Eight_Two | {"prop1":90,"prop2":84,"prop3":"One"} | 13,14 | 560 |

| 10 | 229 | 9 | Product Three Eight | Brand Nine | Seven_Three | {"prop1":84,"prop2":39,"prop3":"One"} | 12,13 | 229 |

+------+-------+----------+---------------------+------------+-------------+---------------------------------------+------------+--------+

10 rows in set (0.00 sec)

+-------+----------+

| price | count(*) |

+-------+----------+

| 306 | 7 |

| 400 | 13 |

...

| 229 | 9 |

| 595 | 10 |

+-------+----------+

10 rows in set (0.00 sec)

+----------+----------+

| brand_id | count(*) |

+----------+----------+

| 1 | 1013 |

| 10 | 998 |

| 5 | 1007 |

| 8 | 1033 |

| 7 | 965 |

+----------+----------+

5 rows in set (0.00 sec)

{

"took": 3,

"timed_out": false,

"hits": {

"total": 10000,

"hits": [

{

"_id": 1,

"_score": 1,

"_source": {

"price": 197,

"brand_id": 10,

"brand_name": "Brand Ten",

"categories": [

10

]

}

},

...

{

"_id": 5,

"_score": 1,

"_source": {

"price": 805,

"brand_id": 7,

"brand_name": "Brand Seven",

"categories": [

11,

12,

13

]

}

}

]

},

"aggregations": {

"group_property": {

"buckets": [

{

"key": 1000,

"doc_count": 11

},

{

"key": 999,

"doc_count": 12

},

...

{

"key": 991,

"doc_count": 7

}

]

},

"group_brand_id": {

"buckets": [

{

"key": 10,

"doc_count": 1019

},

{

"key": 9,

"doc_count": 954

},

{

"key": 8,

"doc_count": 1021

},

{

"key": 7,

"doc_count": 1011

},

{

"key": 6,

"doc_count": 997

}

]

}

}

}

Array

(

[price] => Array

(

[buckets] => Array

(

[0] => Array

(

[key] => 1000

[doc_count] => 11

)

[1] => Array

(

[key] => 999

[doc_count] => 12

)

[2] => Array

(

[key] => 998

[doc_count] => 7

)

[3] => Array

(

[key] => 997

[doc_count] => 14

)

[4] => Array

(

[key] => 996

[doc_count] => 8

)

)

)

[group_brand_id] => Array

(

[buckets] => Array

(

[0] => Array

(

[key] => 10

[doc_count] => 1019

)

[1] => Array

(

[key] => 9

[doc_count] => 954

)

[2] => Array

(

[key] => 8

[doc_count] => 1021

)

[3] => Array

(

[key] => 7

[doc_count] => 1011

)

[4] => Array

(

[key] => 6

[doc_count] => 997

)

)

)

)

{'aggregations': {u'group_brand_id': {u'buckets': [{u'doc_count': 1019,

u'key': 10},

{u'doc_count': 954,

u'key': 9},

{u'doc_count': 1021,

u'key': 8},

{u'doc_count': 1011,

u'key': 7},

{u'doc_count': 997,

u'key': 6}]},

u'group_property': {u'buckets': [{u'doc_count': 11,

u'key': 1000},

{u'doc_count': 12,

u'key': 999},

{u'doc_count': 7,

u'key': 998},

{u'doc_count': 14,

u'key': 997},

{u'doc_count': 8,

u'key': 996}]}},

'hits': {'hits': [{u'_id': u'1',

u'_score': 1,

u'_source': {u'brand_id': 10,

u'brand_name': u'Brand Ten',

u'categories': [10],

u'price': 197,

u'property': u'Six',

u'title': u'Product Eight One'}},

{u'_id': u'2',

u'_score': 1,

u'_source': {u'brand_id': 6,

u'brand_name': u'Brand Six',

u'categories': [12, 13, 14],

u'price': 671,

u'property': u'Four',

u'title': u'Product Nine Seven'}},

{u'_id': u'3',

u'_score': 1,

u'_source': {u'brand_id': 3,

u'brand_name': u'Brand Three',

u'categories': [13, 14, 15],

u'price': 92,

u'property': u'Six',

u'title': u'Product Five Four'}},

{u'_id': u'4',

u'_score': 1,

u'_source': {u'brand_id': 10,

u'brand_name': u'Brand Ten',

u'categories': [11],

u'price': 713,

u'property': u'Five',

u'title': u'Product Eight Nine'}},

{u'_id': u'5',

u'_score': 1,

u'_source': {u'brand_id': 7,

u'brand_name': u'Brand Seven',

u'categories': [11, 12, 13],

u'price': 805,

u'property': u'Two',

u'title': u'Product Ten Three'}}],

'max_score': None,

'total': 10000},

'profile': None,

'timed_out': False,

'took': 4}

{'aggregations': {u'group_brand_id': {u'buckets': [{u'doc_count': 1019,

u'key': 10},

{u'doc_count': 954,

u'key': 9},

{u'doc_count': 1021,

u'key': 8},

{u'doc_count': 1011,

u'key': 7},

{u'doc_count': 997,

u'key': 6}]},

u'group_property': {u'buckets': [{u'doc_count': 11,

u'key': 1000},

{u'doc_count': 12,

u'key': 999},

{u'doc_count': 7,

u'key': 998},

{u'doc_count': 14,

u'key': 997},

{u'doc_count': 8,

u'key': 996}]}},

'hits': {'hits': [{u'_id': u'1',

u'_score': 1,

u'_source': {u'brand_id': 10,

u'brand_name': u'Brand Ten',

u'categories': [10],

u'price': 197,

u'property': u'Six',

u'title': u'Product Eight One'}},

{u'_id': u'2',

u'_score': 1,

u'_source': {u'brand_id': 6,

u'brand_name': u'Brand Six',

u'categories': [12, 13, 14],

u'price': 671,

u'property': u'Four',

u'title': u'Product Nine Seven'}},

{u'_id': u'3',

u'_score': 1,

u'_source': {u'brand_id': 3,

u'brand_name': u'Brand Three',

u'categories': [13, 14, 15],

u'price': 92,

u'property': u'Six',

u'title': u'Product Five Four'}},

{u'_id': u'4',

u'_score': 1,

u'_source': {u'brand_id': 10,

u'brand_name': u'Brand Ten',

u'categories': [11],

u'price': 713,

u'property': u'Five',

u'title': u'Product Eight Nine'}},

{u'_id': u'5',

u'_score': 1,

u'_source': {u'brand_id': 7,

u'brand_name': u'Brand Seven',

u'categories': [11, 12, 13],

u'price': 805,

u'property': u'Two',

u'title': u'Product Ten Three'}}],

'max_score': None,

'total': 10000},

'profile': None,

'timed_out': False,

'took': 4}

{"took":0,"timed_out":false,"hits":{"total":10000,"hits":[{"_id": 1,"_score":1,"_source":{"price":197,"brand_id":10,"brand_name":"Brand Ten","categories":[10],"title":"Product Eight One","property":"Six"}},{"_id": 2,"_score":1,"_source":{"price":671,"brand_id":6,"brand_name":"Brand Six","categories":[12,13,14],"title":"Product Nine Seven","property":"Four"}},{"_id": 3,"_score":1,"_source":{"price":92,"brand_id":3,"brand_name":"Brand Three","categories":[13,14,15],"title":"Product Five Four","property":"Six"}},{"_id": 4,"_score":1,"_source":{"price":713,"brand_id":10,"brand_name":"Brand Ten","categories":[11],"title":"Product Eight Nine","property":"Five"}},{"_id": 5,"_score":1,"_source":{"price":805,"brand_id":7,"brand_name":"Brand Seven","categories":[11,12,13],"title":"Product Ten Three","property":"Two"}}]}}

class SearchResponse {

took: 0

timedOut: false

aggregations: {group_property={buckets=[{key=1000, doc_count=11}, {key=999, doc_count=12}, {key=998, doc_count=7}, {key=997, doc_count=14}, {key=996, doc_count=8}]}, group_brand_id={buckets=[{key=10, doc_count=1019}, {key=9, doc_count=954}, {key=8, doc_count=1021}, {key=7, doc_count=1011}, {key=6, doc_count=997}]}}

hits: class SearchResponseHits {

maxScore: null

total: 10000

hits: [{_id=1, _score=1, _source={price=197, brand_id=10, brand_name=Brand Ten, categories=[10], title=Product Eight One, property=Six}}, {_id=2, _score=1, _source={price=671, brand_id=6, brand_name=Brand Six, categories=[12, 13, 14], title=Product Nine Seven, property=Four}}, {_id=3, _score=1, _source={price=92, brand_id=3, brand_name=Brand Three, categories=[13, 14, 15], title=Product Five Four, property=Six}}, {_id=4, _score=1, _source={price=713, brand_id=10, brand_name=Brand Ten, categories=[11], title=Product Eight Nine, property=Five}}, {_id=5, _score=1, _source={price=805, brand_id=7, brand_name=Brand Seven, categories=[11, 12, 13], title=Product Ten Three, property=Two}}]

}

profile: null

}

class SearchResponse {

took: 0

timedOut: false

aggregations: {group_property={buckets=[{key=1000, doc_count=11}, {key=999, doc_count=12}, {key=998, doc_count=7}, {key=997, doc_count=14}, {key=996, doc_count=8}]}, group_brand_id={buckets=[{key=10, doc_count=1019}, {key=9, doc_count=954}, {key=8, doc_count=1021}, {key=7, doc_count=1011}, {key=6, doc_count=997}]}}

hits: class SearchResponseHits {

maxScore: null

total: 10000

hits: [{_id=1, _score=1, _source={price=197, brand_id=10, brand_name=Brand Ten, categories=[10], title=Product Eight One, property=Six}}, {_id=2, _score=1, _source={price=671, brand_id=6, brand_name=Brand Six, categories=[12, 13, 14], title=Product Nine Seven, property=Four}}, {_id=3, _score=1, _source={price=92, brand_id=3, brand_name=Brand Three, categories=[13, 14, 15], title=Product Five Four, property=Six}}, {_id=4, _score=1, _source={price=713, brand_id=10, brand_name=Brand Ten, categories=[11], title=Product Eight Nine, property=Five}}, {_id=5, _score=1, _source={price=805, brand_id=7, brand_name=Brand Seven, categories=[11, 12, 13], title=Product Ten Three, property=Two}}]

}

profile: null

}

class SearchResponse {

took: 0

timedOut: false

aggregations: {group_property={buckets=[{key=1000, doc_count=11}, {key=999, doc_count=12}, {key=998, doc_count=7}, {key=997, doc_count=14}, {key=996, doc_count=8}]}, group_brand_id={buckets=[{key=10, doc_count=1019}, {key=9, doc_count=954}, {key=8, doc_count=1021}, {key=7, doc_count=1011}, {key=6, doc_count=997}]}}

hits: class SearchResponseHits {

maxScore: null

total: 10000

hits: [{_id=1, _score=1, _source={price=197, brand_id=10, brand_name=Brand Ten, categories=[10], title=Product Eight One, property=Six}}, {_id=2, _score=1, _source={price=671, brand_id=6, brand_name=Brand Six, categories=[12, 13, 14], title=Product Nine Seven, property=Four}}, {_id=3, _score=1, _source={price=92, brand_id=3, brand_name=Brand Three, categories=[13, 14, 15], title=Product Five Four, property=Six}}, {_id=4, _score=1, _source={price=713, brand_id=10, brand_name=Brand Ten, categories=[11], title=Product Eight Nine, property=Five}}, {_id=5, _score=1, _source={price=805, brand_id=7, brand_name=Brand Seven, categories=[11, 12, 13], title=Product Ten Three, property=Two}}]

}

profile: null

}

{

"took": 0,

"timed_out": false,

"hits": {

"total": 5,

"hits": [

{

"_id": 1,

"_score": 1,

"_source": {

"content": "Text 1",

"name": "Doc 1",

"cat": 1

}

},

...

{

"_id": 5,

"_score": 1,

"_source": {

"content": "Text 5",

"name": "Doc 5",

"cat": 4

}

}

]

},

"aggregations": {

"name_group": {

"buckets": [

{

"key": "Doc 1",

"doc_count": 1

},

...

{

"key": "Doc 5",

"doc_count": 1

}

]

},

"cat_group": {

"buckets": [

{

"key": 1,

"doc_count": 2

},

...

{

"key": 4,

"doc_count": 1

}

]

}

}

}

{

"took": 0,

"timed_out": false,

"hits": {

"total": 5,

"hits": [

{

"_id": 1,

"_score": 1,

"_source": {

"content": "Text 1",

"name": "Doc 1",

"cat": 1

}

},

...

{

"_id": 5,

"_score": 1,

"_source": {

"content": "Text 5",

"name": "Doc 5",

"cat": 4

}

}

]

},

"aggregations": {

"name_group": {

"buckets": [

{

"key": "Doc 1",

"doc_count": 1

},

...

{

"key": "Doc 5",

"doc_count": 1

}

]

},

"cat_group": {

"buckets": [

{

"key": 1,

"doc_count": 2

},

...

{

"key": 4,

"doc_count": 1

}

]

}

}

}

Data can be faceted by aggregating another attribute or expression. For example, if the documents contain both the brand ID and the brand name, the facet can return the brand names while aggregating by the brand IDs. This is done with FACET {expr1} BY {expr2}.
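The difference between the displayed value and the grouping key can be illustrated with a small Python sketch. It is a conceptual model only: the `ROWS` data and the `facet_by` helper are hypothetical, and taking the label from the first row of each group is an assumption made for the illustration.

```python
# Hypothetical rows; brand_id is the grouping key, brand_name the displayed label.
ROWS = [
    {"brand_id": 1, "brand_name": "Brand One"},
    {"brand_id": 10, "brand_name": "Brand Ten"},
    {"brand_id": 1, "brand_name": "Brand One"},
    {"brand_id": 5, "brand_name": "Brand Five"},
    {"brand_id": 10, "brand_name": "Brand Ten"},
    {"brand_id": 1, "brand_name": "Brand One"},
]


def facet_by(rows, display_field, group_field):
    """Group rows by `group_field`, but label each bucket with `display_field`."""
    buckets = {}  # group key -> [label, count]
    for row in rows:
        key = row[group_field]
        if key not in buckets:
            # Assumption: the label comes from the first row seen for the group.
            buckets[key] = [row[display_field], 0]
        buckets[key][1] += 1
    return [(label, count) for label, count in buckets.values()]


print(facet_by(ROWS, "brand_name", "brand_id"))
# [('Brand One', 3), ('Brand Ten', 2), ('Brand Five', 1)]
```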

- SQL

- JSON

SELECT * FROM facetdemo FACET brand_name by brand_id;

POST /sql -d "SELECT brand_name, brand_id FROM facetdemo FACET brand_name by brand_id"

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+

| id | price | brand_id | title | brand_name | property | j | categories |

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+

| 1 | 306 | 1 | Product Ten Three | Brand One | Six_Ten | {"prop1":66,"prop2":91,"prop3":"One"} | 10,11 |

| 2 | 400 | 10 | Product Three One | Brand Ten | Four_Three | {"prop1":69,"prop2":19,"prop3":"One"} | 13,14 |

....

| 19 | 855 | 1 | Product Seven Two | Brand One | Eight_Seven | {"prop1":63,"prop2":78,"prop3":"One"} | 10,11,12 |

| 20 | 31 | 9 | Product Four One | Brand Nine | Ten_Four | {"prop1":79,"prop2":42,"prop3":"One"} | 12,13,14 |

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+

20 rows in set (0.00 sec)

+-------------+----------+

| brand_name | count(*) |

+-------------+----------+

| Brand One | 1013 |

| Brand Ten | 998 |

| Brand Five | 1007 |

| Brand Nine | 944 |

| Brand Two | 990 |

| Brand Six | 1039 |

| Brand Three | 1016 |

| Brand Four | 994 |

| Brand Eight | 1033 |

| Brand Seven | 965 |

+-------------+----------+

10 rows in set (0.00 sec)

{

"took": 0,

"timed_out": false,

"hits": {

"total": 20,

"total_relation": "eq",

"hits": [

{

"_id": 1,

"_score": 1500,

"_source": {

"brand_name": "Brand One",

"brand_id": 1

}

},

{

"_id": 2,

"_score": 1500,

"_source": {

"brand_name": "Brand Ten",

"brand_id": 10

}

},

...

{

"_id": 20,

"_score": 1500,

"_source": {

"brand_name": "Brand Nine",

"brand_id": 9

}

}

]

},

"aggregations": {

"brand_name": {

"buckets": [

{

"key": "Brand One",

"doc_count": 1013

},

{

"key": "Brand Ten",

"doc_count": 998

},

...

{

"key": "Brand Seven",

"doc_count": 965

}

]

}

}

}

If you need to remove duplicates from the buckets returned by FACET, you can use DISTINCT field_name, where field_name is the field by which you want to deduplicate. It can also be id (the default) if you run a FACET query against a distributed table and are not sure whether the ids are unique across its tables (the tables should be local and have the same schema).

If you have multiple FACET declarations in your query, field_name should be the same in all of them.

DISTINCT returns an additional column count(distinct ...) before the column count(*), allowing you to obtain both results without needing to make another query.
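To make the two counters concrete, here is a small Python model of what the extra column represents. The `facet_distinct` helper is hypothetical. The rows are the Brand Ten and Brand Four rows from the sample result set in this section, so the numbers can be compared with the sample facet output.

```python
# (brand_name, property) pairs for two brands from the sample result set.
ROWS = [
    ("Brand Ten", "Four"),
    ("Brand Four", "Ten"),
    ("Brand Ten", "Four"),
    ("Brand Ten", "Two"),
    ("Brand Four", "Eight"),
    ("Brand Four", "Five"),
    ("Brand Four", "Two"),
    ("Brand Four", "Eight"),
]


def facet_distinct(rows):
    """Return (value, count_distinct, count_all) per facet value."""
    seen = {}  # facet value -> (set of distinct properties, total rows)
    for brand_name, prop in rows:
        props, total = seen.get(brand_name, (set(), 0))
        props.add(prop)
        seen[brand_name] = (props, total + 1)
    return [(name, len(props), total) for name, (props, total) in seen.items()]


for row in facet_distinct(ROWS):
    print(row)
# ('Brand Ten', 2, 3)
# ('Brand Four', 4, 5)
```

Brand Ten appears three times but with only two different property values, which is why count(distinct property) is 2 while count(*) is 3.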

- SQL

- JSON

SELECT brand_name, property FROM facetdemo FACET brand_name distinct property;

POST /sql -d 'SELECT brand_name, property FROM facetdemo FACET brand_name distinct property'

+-------------+----------+

| brand_name | property |

+-------------+----------+

| Brand Nine | Four |

| Brand Ten | Four |

| Brand One | Five |

| Brand Seven | Nine |

| Brand Seven | Seven |

| Brand Three | Seven |

| Brand Nine | Five |

| Brand Three | Eight |

| Brand Two | Eight |

| Brand Six | Eight |

| Brand Ten | Four |

| Brand Ten | Two |

| Brand Four | Ten |

| Brand One | Nine |

| Brand Four | Eight |

| Brand Nine | Seven |

| Brand Four | Five |

| Brand Three | Four |

| Brand Four | Two |

| Brand Four | Eight |

+-------------+----------+

20 rows in set (0.00 sec)

+-------------+--------------------------+----------+

| brand_name | count(distinct property) | count(*) |

+-------------+--------------------------+----------+

| Brand Nine | 3 | 3 |

| Brand Ten | 2 | 3 |

| Brand One | 2 | 2 |

| Brand Seven | 2 | 2 |

| Brand Three | 3 | 3 |

| Brand Two | 1 | 1 |

| Brand Six | 1 | 1 |

| Brand Four | 4 | 5 |

+-------------+--------------------------+----------+

8 rows in set (0.00 sec)

{

"took": 0,

"timed_out": false,

"hits": {

"total": 20,

"total_relation": "eq",

"hits": [

{

"_score": 1,

"_source": {

"brand_name": "Brand Nine",

"property": "Four"

}

},

{

"_score": 1,

"_source": {

"brand_name": "Brand Ten",

"property": "Four"

}

},

...

{

"_score": 1,

"_source": {

"brand_name": "Brand Four",

"property": "Eight"

}

}

]

},

"aggregations": {

"brand_name": {

"buckets": [

{

"key": "Brand Nine",

"doc_count": 3,

"count(distinct property)": 3

},

{

"key": "Brand Ten",

"doc_count": 3,

"count(distinct property)": 2

},

...

{

"key": "Brand Two",

"doc_count": 1,

"count(distinct property)": 1

},

{

"key": "Brand Six",

"doc_count": 1,

"count(distinct property)": 1

},

{

"key": "Brand Four",

"doc_count": 5,

"count(distinct property)": 4

}

]

}

}

}

Facets can aggregate over expressions. A classic example is the segmentation of prices by specific ranges.
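The bucket numbers produced by INTERVAL(price,200,400,600,800) can be modelled in Python with the standard bisect module. This is a sketch of the semantics, not the server's code: the `interval` helper is hypothetical, and it assumes a value equal to a boundary falls into the higher bucket. The prices are taken from the sample rows in this section.

```python
from bisect import bisect_right
from collections import Counter


def interval(value, *points):
    """Bucket index: 0 below points[0], 1 in [points[0], points[1]), and so on."""
    return bisect_right(points, value)


prices = [197, 671, 92, 713, 805, 306]
ranges = [interval(p, 200, 400, 600, 800) for p in prices]
print(ranges)                           # [0, 3, 0, 3, 4, 1]
print(sorted(Counter(ranges).items()))  # [(0, 2), (1, 1), (3, 2), (4, 1)]
```

Four boundary points yield five possible buckets (0 to 4), which matches the five rows in the price_range facet output.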

- SQL

- JSON

- PHP

- Python

- Python-asyncio

- Javascript

- Java

- C#

- Rust

- TypeScript

- Go

SELECT * FROM facetdemo FACET INTERVAL(price,200,400,600,800) AS price_range;

POST /search -d '

{

"table": "facetdemo",

"query":

{

"match_all": {}

},

"expressions":

{

"price_range": "INTERVAL(price,200,400,600,800)"

},

"aggs":

{

"group_property":

{

"terms":

{

"field": "price_range"

}

}

}

}

'

$index->setName('facetdemo');

$search = $index->search('');

$search->limit(5);

$search->expression('price_range','INTERVAL(price,200,400,600,800)');

$search->facet('price_range','group_property');

$results = $search->get();

print_r($results->getFacets());

res = searchApi.search({"table":"facetdemo","query":{"match_all":{}},"expressions":{"price_range":"INTERVAL(price,200,400,600,800)"},"aggs":{"group_property":{"terms":{"field":"price_range"}}}})

res = await searchApi.search({"table":"facetdemo","query":{"match_all":{}},"expressions":{"price_range":"INTERVAL(price,200,400,600,800)"},"aggs":{"group_property":{"terms":{"field":"price_range"}}}})

res = await searchApi.search({"table":"facetdemo","query":{"match_all":{}},"expressions":{"price_range":"INTERVAL(price,200,400,600,800)"},"aggs":{"group_property":{"terms":{"field":"price_range"}}}});

searchRequest = new SearchRequest();

expressions = new HashMap<String,Object>(){{

put("price_range","INTERVAL(price,200,400,600,800)");

}};

searchRequest.setExpressions(expressions);

aggs = new HashMap<String,Object>(){{

put("group_property", new HashMap<String,Object>(){{

put("terms", new HashMap<String,Object>(){{

put("field","price_range");

}});

}});

}};

searchRequest.setIndex("facetdemo");

searchRequest.setLimit(5);

query = new HashMap<String,Object>();

query.put("match_all",null);

searchRequest.setQuery(query);

searchRequest.setAggs(aggs);

searchResponse = searchApi.search(searchRequest);

var expr = new Dictionary<string, string> { {"price_range", "INTERVAL(price,200,400,600,800)"} } ;

var agg = new Aggregation("group_property", "price_range");

object query = new { match_all=null };

var searchRequest = new SearchRequest("facetdemo", query);

searchRequest.Limit = 5;

searchRequest.Expressions = new List<Object> {expr};

searchRequest.Aggs = new List<Aggregation> {agg};

var searchResponse = searchApi.Search(searchRequest);

let query = SearchQuery::new();

let aggTerms1 = AggTerms::new("price_range");

let agg1 = Aggregation {

terms: Some(Box::new(aggTerms1)),

..Default::default()

};

let mut aggs = HashMap::new();

aggs.insert("group_property".to_string(), agg1);

let mut expr = HashMap::new();

expr.insert("price_range".to_string(), "INTERVAL(price,200,400,600,800)");

let expressions: [HashMap<String, &str>; 1] = [expr];

let search_req = SearchRequest {

table: "facetdemo".to_string(),

query: Some(Box::new(query)),

expressions: serde_json::json!(expressions),

aggs: serde_json::json!(aggs),

limit: serde_json::json!(5),

..Default::default()

};

let search_res = search_api.search(search_req).await;

res = await searchApi.search({

index: 'test',

query: { match_all:{} },

expressions: { cat_range: "INTERVAL(cat,1,3)" },

aggs: {

expr_group: {

terms: { field : 'cat_range' }

}

}

});

query := map[string]interface{} {}

searchRequest.SetQuery(query)

exprs := map[string]string{ "cat_range": "INTERVAL(cat,1,3)" }

searchRequest.SetExpressions(exprs)

aggByExpr := manticoreclient.NewAggregation()

aggTerms := manticoreclient.NewAggregationTerms()

aggTerms.SetField("cat_range")

aggByExpr.SetTerms(aggTerms)

aggs := map[string]Aggregation{ "expr_group": aggByExpr }

searchRequest.SetAggs(aggs)

res, _, _ := apiClient.SearchAPI.Search(context.Background()).SearchRequest(*searchRequest).Execute()

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+-------------+

| id | price | brand_id | title | brand_name | property | j | categories | price_range |

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+-------------+

| 1 | 306 | 1 | Product Ten Three | Brand One | Six_Ten | {"prop1":66,"prop2":91,"prop3":"One"} | 10,11 | 1 |

...

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+-------------+

20 rows in set (0.00 sec)

+-------------+----------+

| price_range | count(*) |

+-------------+----------+

| 0 | 1885 |

| 3 | 1973 |

| 4 | 2100 |

| 2 | 1999 |

| 1 | 2043 |

+-------------+----------+

5 rows in set (0.01 sec)

{

"took": 3,

"timed_out": false,

"hits": {

"total": 10000,

"hits": [

{

"_id": 1,

"_score": 1,

"_source": {

"price": 197,

"brand_id": 10,

"brand_name": "Brand Ten",

"categories": [

10

],

"price_range": 0

}

},

...

{

"_id": 20,

"_score": 1,

"_source": {

"price": 227,

"brand_id": 3,

"brand_name": "Brand Three",

"categories": [

12,

13

],

"price_range": 1

}

}

]

},

"aggregations": {

"group_property": {

"buckets": [

{

"key": 4,

"doc_count": 2100

},

{

"key": 3,

"doc_count": 1973

},

{

"key": 2,

"doc_count": 1999

},

{

"key": 1,

"doc_count": 2043

},

{

"key": 0,

"doc_count": 1885

}

]

}

}

}

Array

(

[group_property] => Array

(

[buckets] => Array

(

[0] => Array

(

[key] => 4

[doc_count] => 2100

)

[1] => Array

(

[key] => 3

[doc_count] => 1973

)

[2] => Array

(

[key] => 2

[doc_count] => 1999

)

[3] => Array

(

[key] => 1

[doc_count] => 2043

)

[4] => Array

(

[key] => 0

[doc_count] => 1885

)

)

)

)

{'aggregations': {u'group_brand_id': {u'buckets': [{u'doc_count': 1019,

u'key': 10},

{u'doc_count': 954,

u'key': 9},

{u'doc_count': 1021,

u'key': 8},

{u'doc_count': 1011,

u'key': 7},

{u'doc_count': 997,

u'key': 6}]},

u'group_property': {u'buckets': [{u'doc_count': 11,

u'key': 1000},

{u'doc_count': 12,

u'key': 999},

{u'doc_count': 7,

u'key': 998},

{u'doc_count': 14,

u'key': 997},

{u'doc_count': 8,

u'key': 996}]}},

'hits': {'hits': [{u'_id': u'1',

u'_score': 1,

u'_source': {u'brand_id': 10,

u'brand_name': u'Brand Ten',

u'categories': [10],

u'price': 197,

u'property': u'Six',

u'title': u'Product Eight One'}},

{u'_id': u'2',

u'_score': 1,

u'_source': {u'brand_id': 6,

u'brand_name': u'Brand Six',

u'categories': [12, 13, 14],

u'price': 671,

u'property': u'Four',

u'title': u'Product Nine Seven'}},

{u'_id': u'3',

u'_score': 1,

u'_source': {u'brand_id': 3,

u'brand_name': u'Brand Three',

u'categories': [13, 14, 15],

u'price': 92,

u'property': u'Six',

u'title': u'Product Five Four'}},

{u'_id': u'4',

u'_score': 1,

u'_source': {u'brand_id': 10,

u'brand_name': u'Brand Ten',

u'categories': [11],

u'price': 713,

u'property': u'Five',

u'title': u'Product Eight Nine'}},

{u'_id': u'5',

u'_score': 1,

u'_source': {u'brand_id': 7,

u'brand_name': u'Brand Seven',

u'categories': [11, 12, 13],

u'price': 805,

u'property': u'Two',

u'title': u'Product Ten Three'}}],

'max_score': None,

'total': 10000},

'profile': None,

'timed_out': False,

'took': 0}

{'aggregations': {u'group_brand_id': {u'buckets': [{u'doc_count': 1019,

u'key': 10},

{u'doc_count': 954,

u'key': 9},

{u'doc_count': 1021,

u'key': 8},

{u'doc_count': 1011,

u'key': 7},

{u'doc_count': 997,

u'key': 6}]},

u'group_property': {u'buckets': [{u'doc_count': 11,

u'key': 1000},

{u'doc_count': 12,

u'key': 999},

{u'doc_count': 7,

u'key': 998},

{u'doc_count': 14,

u'key': 997},

{u'doc_count': 8,

u'key': 996}]}},

'hits': {'hits': [{u'_id': u'1',

u'_score': 1,

u'_source': {u'brand_id': 10,

u'brand_name': u'Brand Ten',

u'categories': [10],

u'price': 197,

u'property': u'Six',

u'title': u'Product Eight One'}},

{u'_id': u'2',

u'_score': 1,

u'_source': {u'brand_id': 6,

u'brand_name': u'Brand Six',

u'categories': [12, 13, 14],

u'price': 671,

u'property': u'Four',

u'title': u'Product Nine Seven'}},

{u'_id': u'3',

u'_score': 1,

u'_source': {u'brand_id': 3,

u'brand_name': u'Brand Three',

u'categories': [13, 14, 15],

u'price': 92,

u'property': u'Six',

u'title': u'Product Five Four'}},

{u'_id': u'4',

u'_score': 1,

u'_source': {u'brand_id': 10,

u'brand_name': u'Brand Ten',

u'categories': [11],

u'price': 713,

u'property': u'Five',

u'title': u'Product Eight Nine'}},

{u'_id': u'5',

u'_score': 1,

u'_source': {u'brand_id': 7,

u'brand_name': u'Brand Seven',

u'categories': [11, 12, 13],

u'price': 805,

u'property': u'Two',

u'title': u'Product Ten Three'}}],

'max_score': None,

'total': 10000},

'profile': None,

'timed_out': False,

'took': 0}

{"took":0,"timed_out":false,"hits":{"total":10000,"hits":[{"_id": 1,"_score":1,"_source":{"price":197,"brand_id":10,"brand_name":"Brand Ten","categories":[10],"title":"Product Eight One","property":"Six","price_range":0}},{"_id": 2,"_score":1,"_source":{"price":671,"brand_id":6,"brand_name":"Brand Six","categories":[12,13,14],"title":"Product Nine Seven","property":"Four","price_range":3}},{"_id": 3,"_score":1,"_source":{"price":92,"brand_id":3,"brand_name":"Brand Three","categories":[13,14,15],"title":"Product Five Four","property":"Six","price_range":0}},{"_id": 4,"_score":1,"_source":{"price":713,"brand_id":10,"brand_name":"Brand Ten","categories":[11],"title":"Product Eight Nine","property":"Five","price_range":3}},{"_id": 5,"_score":1,"_source":{"price":805,"brand_id":7,"brand_name":"Brand Seven","categories":[11,12,13],"title":"Product Ten Three","property":"Two","price_range":4}},{"_id": 6,"_score":1,"_source":{"price":420,"brand_id":2,"brand_name":"Brand Two","categories":[10,11],"title":"Product Two One","property":"Six","price_range":2}},{"_id": 7,"_score":1,"_source":{"price":412,"brand_id":9,"brand_name":"Brand Nine","categories":[10],"title":"Product Four Nine","property":"Eight","price_range":2}},{"_id": 8,"_score":1,"_source":{"price":300,"brand_id":9,"brand_name":"Brand Nine","categories":[13,14,15],"title":"Product Eight Four","property":"Five","price_range":1}},{"_id": 9,"_score":1,"_source":{"price":728,"brand_id":1,"brand_name":"Brand One","categories":[11],"title":"Product Nine Six","property":"Four","price_range":3}},{"_id": 10,"_score":1,"_source":{"price":622,"brand_id":3,"brand_name":"Brand Three","categories":[10,11],"title":"Product Six Seven","property":"Two","price_range":3}},{"_id": 11,"_score":1,"_source":{"price":462,"brand_id":5,"brand_name":"Brand Five","categories":[10,11],"title":"Product Ten Two","property":"Eight","price_range":2}},{"_id": 12,"_score":1,"_source":{"price":939,"brand_id":7,"brand_name":"Brand Seven","categories":[12,13],"title":"Product Nine Seven","property":"Six","price_range":4}},{"_id": 13,"_score":1,"_source":{"price":948,"brand_id":8,"brand_name":"Brand Eight","categories":[12],"title":"Product Ten One","property":"Six","price_range":4}},{"_id": 14,"_score":1,"_source":{"price":900,"brand_id":9,"brand_name":"Brand Nine","categories":[12,13,14],"title":"Product Ten Nine","property":"Three","price_range":4}},{"_id": 15,"_score":1,"_source":{"price":224,"brand_id":3,"brand_name":"Brand Three","categories":[13],"title":"Product Two Six","property":"Four","price_range":1}},{"_id": 16,"_score":1,"_source":{"price":713,"brand_id":10,"brand_name":"Brand Ten","categories":[12],"title":"Product Two Four","property":"Six","price_range":3}},{"_id": 17,"_score":1,"_source":{"price":510,"brand_id":2,"brand_name":"Brand Two","categories":[10],"title":"Product Ten Two","property":"Seven","price_range":2}},{"_id": 18,"_score":1,"_source":{"price":702,"brand_id":10,"brand_name":"Brand Ten","categories":[12,13],"title":"Product Nine One","property":"Three","price_range":3}},{"_id": 19,"_score":1,"_source":{"price":836,"brand_id":4,"brand_name":"Brand Four","categories":[10,11,12],"title":"Product Four Five","property":"Two","price_range":4}},{"_id": 20,"_score":1,"_source":{"price":227,"brand_id":3,"brand_name":"Brand Three","categories":[12,13],"title":"Product Three Four","property":"Ten","price_range":1}}]}}

class SearchResponse {

took: 0

timedOut: false

aggregations: {group_property={buckets=[{key=4, doc_count=2100}, {key=3, doc_count=1973}, {key=2, doc_count=1999}, {key=1, doc_count=2043}, {key=0, doc_count=1885}]}}

hits: class SearchResponseHits {

maxScore: null

total: 10000

hits: [{_id=1, _score=1, _source={price=197, brand_id=10, brand_name=Brand Ten, categories=[10], title=Product Eight One, property=Six, price_range=0}}, {_id=2, _score=1, _source={price=671, brand_id=6, brand_name=Brand Six, categories=[12, 13, 14], title=Product Nine Seven, property=Four, price_range=3}}, {_id=3, _score=1, _source={price=92, brand_id=3, brand_name=Brand Three, categories=[13, 14, 15], title=Product Five Four, property=Six, price_range=0}}, {_id=4, _score=1, _source={price=713, brand_id=10, brand_name=Brand Ten, categories=[11], title=Product Eight Nine, property=Five, price_range=3}}, {_id=5, _score=1, _source={price=805, brand_id=7, brand_name=Brand Seven, categories=[11, 12, 13], title=Product Ten Three, property=Two, price_range=4}}]

}

profile: null

}

{

"took": 0,

"timed_out": false,

"hits": {

"total": 5,

"hits": [

{

"_id": 1,

"_score": 1,

"_source": {

"content": "Text 1",

"name": "Doc 1",

"cat": 1,

"cat_range": 1

}

},

...

{

"_id": 5,

"_score": 1,

"_source": {

"content": "Text 5",

"name": "Doc 5",

"cat": 4,

"cat_range": 2

}

}

]

},

"aggregations": {

"expr_group": {

"buckets": [

{

"key": 0,

"doc_count": 0

},

{

"key": 1,

"doc_count": 3

},

{

"key": 2,

"doc_count": 2

}

]

}

}

}

Facets can aggregate over multi-level grouping, with the result set being the same as if the query performed a multi-level grouping:

- SQL

- JSON

SELECT *,INTERVAL(price,200,400,600,800) AS price_range FROM facetdemo

FACET price_range AS fprice_range,brand_name ORDER BY brand_name asc;

POST /sql?mode=raw -d "SELECT brand_name,INTERVAL(price,200,400,600,800) AS price_range FROM facetdemo FACET price_range AS fprice_range,brand_name ORDER BY brand_name asc"

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+-------------+

| id | price | brand_id | title | brand_name | property | j | categories | price_range |

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+-------------+

| 1 | 306 | 1 | Product Ten Three | Brand One | Six_Ten | {"prop1":66,"prop2":91,"prop3":"One"} | 10,11 | 1 |

...

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+-------------+

20 rows in set (0.00 sec)

+--------------+-------------+----------+

| fprice_range | brand_name | count(*) |

+--------------+-------------+----------+

| 1 | Brand Eight | 197 |

| 4 | Brand Eight | 235 |

| 3 | Brand Eight | 203 |

| 2 | Brand Eight | 201 |

| 0 | Brand Eight | 197 |

| 4 | Brand Five | 230 |

| 2 | Brand Five | 197 |

| 1 | Brand Five | 204 |

| 3 | Brand Five | 193 |

| 0 | Brand Five | 183 |

| 1 | Brand Four | 195 |

...

[

{

"columns": [

{

"brand_name": {

"type": "string"

}

},

{

"price_range": {

"type": "long"

}

}

],

"data": [

{

"brand_name": "Brand One",

"price_range": 1

},

...

],

"total": 20,

"error": "",

"warning": ""

},

{

"columns": [

{

"fprice_range": {

"type": "long"

}

},

{

"brand_name": {

"type": "string"

}

},

{

"count(*)": {

"type": "long long"

}

}

],

"data": [

{

"fprice_range": 1,

"brand_name": "Brand Eight",

"count(*)": 197

},

{

"fprice_range": 4,

"brand_name": "Brand Eight",

"count(*)": 235

},

...

{

"fprice_range": 0,

"brand_name": "Brand Five",

"count(*)": 183

},

{

"fprice_range": 1,

"brand_name": "Brand Four",

"count(*)": 195

}

],

"total": 10,

"error": "",

"warning": ""

}

]

Facets can aggregate over histogram values by constructing fixed-size buckets over the values. The key function is:

key_of_the_bucket = offset + interval * floor ( ( value - offset ) / interval )

The histogram argument interval must be positive, and the histogram argument offset must be non-negative and less than interval. By default, the buckets are returned as an array. The histogram argument keyed makes the response a dictionary with the bucket keys.
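The bucketing rule can be sketched in a few lines of Python. This is only an illustration, assuming the usual fixed-width rule key = offset + interval * floor((value - offset) / interval); the function names histogram_key and histogram_buckets and the sample prices are hypothetical and are not part of Manticore.

```python
import math
from collections import Counter


def histogram_key(value, interval, offset=0):
    """Return the key of the fixed-size bucket that contains value."""
    if interval <= 0:
        raise ValueError("interval must be positive")
    if not 0 <= offset < interval:
        raise ValueError("offset must be in [0, interval)")
    return offset + interval * math.floor((value - offset) / interval)


def histogram_buckets(values, interval, offset=0, keyed=False):
    """Count values per bucket; keyed=True returns a dict instead of a list."""
    counts = Counter(histogram_key(v, interval, offset) for v in values)
    buckets = [{"key": k, "doc_count": counts[k]} for k in sorted(counts)]
    if keyed:
        # Dictionary form: bucket keys become the dictionary keys.
        return {str(b["key"]): b for b in buckets}
    return buckets


prices = [31, 197, 306, 420, 671, 939]  # hypothetical sample data
print(histogram_buckets(prices, interval=300))
print(histogram_buckets(prices, interval=300, keyed=True))
```

With interval=300 and no offset, every value from 0 to 299 falls into the bucket with key 0, values from 300 to 599 into the bucket with key 300, and so on.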

- SQL

- JSON

- JSON 2

SELECT COUNT(*), HISTOGRAM(price, {hist_interval=100}) as price_range FROM facets GROUP BY price_range ORDER BY price_range ASC;

POST /search -d '

{

"size": 0,

"table": "facets",

"aggs": {

"price_range": {

"histogram": {

"field": "price",

"interval": 300

}

}

}

}'

POST /search -d '

{

"size": 0,

"table": "facets",

"aggs": {

"price_range": {

"histogram": {

"field": "price",

"interval": 300,

"keyed": true

}

}

}

}'

+----------+-------------+

| count(*) | price_range |

+----------+-------------+

| 5 | 0 |

| 5 | 100 |

| 1 | 300 |

| 4 | 400 |

| 1 | 500 |

| 3 | 700 |

| 1 | 900 |

+----------+-------------+

{

"took": 0,

"timed_out": false,

"hits": {

"total": 20,

"total_relation": "eq",

"hits": []

},

"aggregations": {

"price_range": {

"buckets": [

{

"key": 0,

"doc_count": 10

},

{

"key": 300,

"doc_count": 6

},

{

"key": 600,

"doc_count": 3

},

{

"key": 900,

"doc_count": 1

}

]

}

}

}

{

"took": 0,

"timed_out": false,

"hits": {

"total": 20,

"total_relation": "eq",

"hits": []

},

"aggregations": {

"price_range": {

"buckets": {

"0": {

"key": 0,

"doc_count": 10

},

"300": {

"key": 300,

"doc_count": 6

},

"600": {

"key": 600,

"doc_count": 3

},

"900": {

"key": 900,

"doc_count": 1

}

}

}

}

}

Facets can aggregate over histogram date values, which is similar to the normal histogram. The difference is that the interval is specified using a date or time expression. Such expressions require special support because the intervals are not always of fixed length. Values are rounded down to the start of their bucket using the following key function:

key_of_the_bucket = interval * floor ( value / interval )

The histogram parameter calendar_interval is calendar-aware: it accounts for months having different numbers of days.

Unlike calendar_interval, the fixed_interval parameter uses a fixed number of units and does not deviate, regardless of where it falls on the calendar. However, fixed_interval cannot process units such as months because a month is not a fixed quantity. Attempting to specify units like weeks or months for fixed_interval will result in an error.

The accepted intervals are described in the date_histogram expression. By default, the buckets are returned as an array. The histogram argument keyed makes the response a dictionary with the bucket keys.

In JSON queries, date_histogram also supports time_zone and offset with calendar_interval:

- time_zone changes the timezone used to round calendar buckets and to format key_as_string. It must be an IANA timezone name supported by the server, for example Asia/Novosibirsk. Numeric UTC offsets such as +03:00 are not supported.

- offset shifts calendar bucket boundaries by a fixed amount before rounding. It can be a fixed-interval string using the same units as fixed_interval, for example 3h, or an integer number of seconds, for example 10800. The value can be prefixed with + or -.

time_zone and offset are not supported with fixed_interval.
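The difference between calendar and fixed intervals can be illustrated with a small Python sketch. It is a simplified model that works in UTC only and ignores time_zone and offset; the helper names month_bucket_key, fixed_bucket_key and key_as_string are hypothetical and do not correspond to Manticore functions.

```python
from datetime import datetime, timezone


def month_bucket_key(ts):
    """Calendar bucketing: round a Unix timestamp down to the start of its UTC month."""
    dt = datetime.fromtimestamp(ts, tz=timezone.utc)
    start = dt.replace(day=1, hour=0, minute=0, second=0, microsecond=0)
    return int(start.timestamp())


def fixed_bucket_key(ts, interval_seconds):
    """Fixed bucketing: interval * floor(value / interval)."""
    return interval_seconds * (ts // interval_seconds)


def key_as_string(key):
    """Format a bucket key the way the JSON examples show it."""
    return datetime.fromtimestamp(key, tz=timezone.utc).strftime("%Y-%m-%dT%H:%M:%S")


ts = 1487000000  # a moment in mid-February 2017 (UTC)
month_key = month_bucket_key(ts)
print(month_key, key_as_string(month_key))  # calendar month bucket
print(fixed_bucket_key(ts, 86400))          # fixed one-day bucket
```

A month bucket has to be computed from calendar fields because its length varies, while a fixed bucket is plain integer arithmetic on the timestamp.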

- SQL

- JSON

SELECT count(*), DATE_HISTOGRAM(tm, {calendar_interval='month'}) AS months FROM idx_dates GROUP BY months ORDER BY months ASC;

POST /search -d '

{

"table": "idx_dates",

"size": 0,

"aggs": {

"months": {

"date_histogram": {

"field": "tm",

"keyed": true,

"calendar_interval": "month"

}

}

}

}'

+----------+------------+

| count(*) | months |

+----------+------------+

| 442 | 1485907200 |

| 744 | 1488326400 |

| 720 | 1491004800 |

| 230 | 1493596800 |

+----------+------------+

{

"timed_out": false,

"hits": {

"total": 2136,

"total_relation": "eq",

"hits": []

},

"aggregations": {

"months": {

"buckets": {

"2017-02-01T00:00:00": {

"key": 1485907200,

"key_as_string": "2017-02-01T00:00:00",

"doc_count": 442

},

"2017-03-01T00:00:00": {

"key": 1488326400,

"key_as_string": "2017-03-01T00:00:00",

"doc_count": 744

},

"2017-04-01T00:00:00": {

"key": 1491004800,

"key_as_string": "2017-04-01T00:00:00",

"doc_count": 720

},

"2017-05-01T00:00:00": {

"key": 1493596800,

"key_as_string": "2017-05-01T00:00:00",

"doc_count": 230

}

}

}

}

}

Facets can aggregate over a set of ranges. The values are checked against the bucket range, where each bucket includes the from value and excludes the to value from the range.

Setting the keyed property to true makes the response a dictionary with the bucket keys rather than an array.
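The half-open range rule (from is included, to is excluded) can be sketched in Python as follows. This is a toy model over an in-memory list; the helper names range_key, in_range and range_buckets and the sample values are hypothetical, and the key format simply mirrors the keys visible in the JSON responses of this section.

```python
def range_key(r):
    """Build a key such as '*-99', '99-550' or '550-*' from a range definition."""
    lo = r.get("from", "*")
    hi = r.get("to", "*")
    return f"{lo}-{hi}"


def in_range(value, r):
    """Half-open check: 'from' is inclusive, 'to' is exclusive."""
    if "from" in r and value < r["from"]:
        return False
    if "to" in r and value >= r["to"]:
        return False
    return True


def range_buckets(values, ranges, keyed=False):
    """Count values per range; keyed=True returns a dict keyed by range_key."""
    buckets = []
    for r in ranges:
        bucket = dict(r)
        bucket["doc_count"] = sum(1 for v in values if in_range(v, r))
        buckets.append((range_key(r), bucket))
    if keyed:
        return {key: bucket for key, bucket in buckets}
    return [{"key": key, **bucket} for key, bucket in buckets]


ranges = [{"to": 99}, {"from": 99, "to": 550}, {"from": 550}]
print(range_buckets([31, 99, 549, 550, 805], ranges))
```

Note that the value 99 lands in the 99-550 bucket and 550 lands in the 550-* bucket, because the upper bound of each range is exclusive.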

- SQL

- JSON

- JSON 2

SELECT COUNT(*), RANGE(price, {range_to=150},{range_from=150,range_to=300},{range_from=300}) price_range FROM facets GROUP BY price_range ORDER BY price_range ASC;

POST /search -d '

{

"size": 0,

"table": "facets",

"aggs": {

"price_range": {

"range": {

"field": "price",

"ranges": [

{

"to": 99

},

{

"from": 99,

"to": 550

},

{

"from": 550

}

]

}

}

}

}'

POST /search -d '

{

"size":0,

"table":"facets",

"aggs":{

"price_range":{

"range":{

"field":"price",

"keyed":true,

"ranges":[

{

"from":100,

"to":399

},

{

"from":399

}

]

}

}

}

}'

+----------+-------------+

| count(*) | price_range |

+----------+-------------+

| 8 | 0 |

| 2 | 1 |

| 10 | 2 |

+----------+-------------+

{

"took": 0,

"timed_out": false,

"hits": {

"total": 20,

"total_relation": "eq",

"hits": []

},

"aggregations": {

"price_range": {

"buckets": [

{

"key": "*-99",

"to": 99,

"doc_count": 5

},

{

"key": "99-550",

"from": 99,

"to": 550,

"doc_count": 11

},

{

"key": "550-*",

"from": 550,

"doc_count": 4

}

]

}

}

}

{

"took": 0,

"timed_out": false,

"hits": {

"total": 20,

"total_relation": "eq",

"hits": []

},

"aggregations": {

"price_range": {

"buckets": {

"100-399": {

"from": 100,

"to": 399,

"doc_count": 6

},

"399-*": {

"from": 399,

"doc_count": 9

}

}

}

}

}

Facets can aggregate over a set of date ranges, which is similar to the normal range. The difference is that the from and to values can be expressed in Date math expressions. This aggregation includes the from value and excludes the to value for each range. Setting the keyed property to true makes the response a dictionary with the bucket keys rather than an array.
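To make the date math expressions used in this section more concrete, here is a deliberately narrow Python sketch. It only understands expressions of the form YYYY||+NM/M (a year anchor, a shift in months, and rounding down to the month), which is an assumption made for this example; real date math is far richer. The names resolve_month_math and date_range_index are hypothetical.

```python
import re
from datetime import datetime, timezone

# Only 'YYYY||+NM/M' or 'YYYY||-NM/M' is supported in this sketch.
_PATTERN = re.compile(r"^(\d{4})\|\|([+-]\d+)M/M$")


def resolve_month_math(expr):
    """Resolve an expression such as '2017||+2M/M' to a UTC datetime."""
    m = _PATTERN.match(expr)
    if not m:
        raise ValueError(f"unsupported expression: {expr}")
    year, shift = int(m.group(1)), int(m.group(2))
    months = year * 12 + shift  # the anchor is January of the given year
    # The result is already a month start, so the '/M' rounding is a no-op here.
    return datetime(months // 12, months % 12 + 1, 1, tzinfo=timezone.utc)


def date_range_index(ts, ranges):
    """Return the index of the first range containing ts (from inclusive, to exclusive)."""
    for i, r in enumerate(ranges):
        lo = resolve_month_math(r["from"]).timestamp() if "from" in r else float("-inf")
        hi = resolve_month_math(r["to"]).timestamp() if "to" in r else float("inf")
        if lo <= ts < hi:
            return i
    return None


ranges = [
    {"to": "2017||+2M/M"},
    {"from": "2017||+2M/M", "to": "2017||+5M/M"},
    {"from": "2017||+5M/M"},
]
print(resolve_month_math("2017||+2M/M").isoformat())
print(date_range_index(1488326400, ranges))
```

Under these assumptions, 2017||+2M/M resolves to the start of March 2017, which agrees with the 2017-03-01T00:00:00 boundary in the JSON response.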

- SQL

- JSON

SELECT COUNT(*), DATE_RANGE(tm, {range_to='2017||+2M/M'},{range_from='2017||+2M/M',range_to='2017||+5M/M'},{range_from='2017||+5M/M'}) AS points FROM idx_dates GROUP BY points ORDER BY points ASC;

POST /search -d '

{

"table": "idx_dates",

"size": 0,

"aggs": {

"points": {

"date_range": {

"field": "tm",

"keyed": true,

"ranges": [

{

"to": "2017||+2M/M"

},

{

"from": "2017||+2M/M",

"to": "2017||+4M/M"

},

{

"from": "2017||+4M/M",

"to": "2017||+5M/M"

},

{

"from": "2017||+5M/M"

}

]

}

}

}

}'

+----------+--------+

| count(*) | points |

+----------+--------+

| 442 | 0 |

| 1464 | 1 |

| 230 | 2 |

+----------+--------+

{

"timed_out": false,

"hits": {

"total": 2136,

"total_relation": "eq",

"hits": []

},

"aggregations": {

"points": {

"buckets": {

"*-2017-03-01T00:00:00": {

"to": "2017-03-01T00:00:00",

"doc_count": 442

},

"2017-03-01T00:00:00-2017-04-01T00:00:00": {

"from": "2017-03-01T00:00:00",

"to": "2017-04-01T00:00:00",

"doc_count": 744

},

"2017-04-01T00:00:00-2017-05-01T00:00:00": {

"from": "2017-04-01T00:00:00",

"to": "2017-05-01T00:00:00",

"doc_count": 720

},

"2017-05-01T00:00:00-*": {

"from": "2017-05-01T00:00:00",

"doc_count": 230

}

}

}

}

}

Facets support the ORDER BY clause just like a standard query. Each facet can have its own ordering, and the facet ordering doesn't affect the main result set's ordering, which is determined by the main query's ORDER BY. Sorting can be done on attribute name, count (using COUNT(*), COUNT(DISTINCT attribute_name)), or the special FACET() function, which provides the aggregated data values. By default, a query with ORDER BY COUNT(*) will sort in descending order.

- SQL

- JSON

SELECT * FROM facetdemo

FACET brand_name BY brand_id ORDER BY FACET() ASC

FACET brand_name BY brand_id ORDER BY brand_name ASC

FACET brand_name BY brand_id ORDER BY COUNT(*) DESC

FACET brand_name BY brand_id ORDER BY COUNT(*);

POST /search -d '

{

"table":"table_name",

"aggs":{

"group_property":{

"terms":{

"field":"a"

},

"sort":[

{

"count(*)":{

"order":"desc"

}

}

]

}

}

}'

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+

| id | price | brand_id | title | brand_name | property | j | categories |

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+

| 1 | 306 | 1 | Product Ten Three | Brand One | Six_Ten | {"prop1":66,"prop2":91,"prop3":"One"} | 10,11 |

...

| 20 | 31 | 9 | Product Four One | Brand Nine | Ten_Four | {"prop1":79,"prop2":42,"prop3":"One"} | 12,13,14 |

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+

20 rows in set (0.01 sec)

+-------------+----------+

| brand_name | count(*) |

+-------------+----------+

| Brand One | 1013 |

| Brand Two | 990 |

| Brand Three | 1016 |

| Brand Four | 994 |

| Brand Five | 1007 |

| Brand Six | 1039 |

| Brand Seven | 965 |

| Brand Eight | 1033 |

| Brand Nine | 944 |

| Brand Ten | 998 |

+-------------+----------+

10 rows in set (0.01 sec)

+-------------+----------+

| brand_name | count(*) |

+-------------+----------+

| Brand Eight | 1033 |

| Brand Five | 1007 |

| Brand Four | 994 |

| Brand Nine | 944 |

| Brand One | 1013 |

| Brand Seven | 965 |

| Brand Six | 1039 |

| Brand Ten | 998 |

| Brand Three | 1016 |

| Brand Two | 990 |

+-------------+----------+

10 rows in set (0.01 sec)

+-------------+----------+

| brand_name | count(*) |

+-------------+----------+

| Brand Six | 1039 |

| Brand Eight | 1033 |

| Brand Three | 1016 |

| Brand One | 1013 |

| Brand Five | 1007 |

| Brand Ten | 998 |

| Brand Four | 994 |

| Brand Two | 990 |

| Brand Seven | 965 |

| Brand Nine | 944 |

+-------------+----------+

10 rows in set (0.01 sec)

{

"took": 0,

"timed_out": false,

"hits": {

"total": 6,

"total_relation": "eq",

"hits": [

{

"_id": 1515697460415037554,

"_score": 1,

"_source": {

"a": 1

}

},

{

"_id": 1515697460415037555,

"_score": 1,

"_source": {

"a": 2

}

},

{

"_id": 1515697460415037556,

"_score": 1,

"_source": {

"a": 2

}

},

{

"_id": 1515697460415037557,

"_score": 1,

"_source": {

"a": 3

}

},

{

"_id": 1515697460415037558,

"_score": 1,

"_source": {

"a": 3

}

},

{

"_id": 1515697460415037559,

"_score": 1,

"_source": {

"a": 3

}

}

]

},

"aggregations": {

"group_property": {

"buckets": [

{

"key": 3,

"doc_count": 3

},

{

"key": 2,

"doc_count": 2

},

{

"key": 1,

"doc_count": 1

}

]

}

}

}

Before counting buckets for a facet, Manticore first decides which filters from the main query should be applied to that facet.

Built-in modes:

- strict: apply all filters from the main query and keep regular facet output

- auto: apply all filters from the main query except filters on this same facet, and add a status marker; selected buckets are selected, sibling buckets are available

- max: count buckets from the broad base query and add a status marker for each bucket

Manual overrides:

- all filters (SQL only): apply all main-query filters to this facet

- filters: apply only the listed main-query filters to this facet

- exclude_filters: apply all main-query filters except the listed ones to this facet

Short version:

- strict = apply everything

- auto = apply everything except this facet's own filters + status

- max = broad base-query counts + status

- all filters (SQL only) = apply everything

- filters = apply only these filters

- exclude_filters = apply everything except these filters

Performance note:

- max is the most expensive facet mode because it has to collect broad facet counts and strict/current availability metadata

- on large datasets or queries with many facets, max can be much slower than strict or auto

- use auto when the UI needs selectable buckets from the current filter scope, and max when it also needs broad bucket lists with unavailable values

Example

If the main query has:

- brand='nike'

- color='red'

- size='small'

and we calculate FACET color, then:

- strict: apply brand + color + size

- auto: apply brand + size, and return selected color buckets with status=selected and sibling color buckets with status=available

- max: apply the broad base query without brand, color, or size, and return color buckets with status

- filters=["brand"]: apply only brand

- exclude_filters=["size"]: apply brand + color
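The filter-scope rules from this example can be summarized as a small Python function. It is a toy model of the rules described above, not Manticore's implementation: the name filters_for_facet and its parameters are hypothetical, and the order in which the manual overrides are checked is an assumption for cases where more than one is given.

```python
def filters_for_facet(main_filters, facet_field, mode="strict",
                      filters=None, exclude_filters=None, all_filters=False):
    """Return the names of main-query filters applied when counting one facet.

    Manual overrides (all_filters, filters, exclude_filters) take priority
    over the mode, mirroring the rule that they override the mode's scope.
    """
    names = list(main_filters)
    if all_filters:
        return names
    if filters is not None:
        return [n for n in names if n in filters]
    if exclude_filters is not None:
        return [n for n in names if n not in exclude_filters]
    if mode == "strict":
        return names
    if mode == "auto":
        # Everything except filters on the faceted field itself.
        return [n for n in names if n != facet_field]
    if mode == "max":
        # Broad base query: none of the attribute filters are applied.
        return []
    raise ValueError(f"unknown mode: {mode}")


main = {"brand": "nike", "color": "red", "size": "small"}
for mode in ("strict", "auto", "max"):
    print(mode, filters_for_facet(main, "color", mode=mode))
print(filters_for_facet(main, "color", filters=["brand"]))
print(filters_for_facet(main, "color", exclude_filters=["size"]))
```

The sketch only decides which filters take part in counting; attaching the status marker in auto and max modes is a separate step.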

- SQL

- JSON

SELECT id

FROM products

WHERE MATCH('sneakers') AND color_id=1 AND size_id=42 AND brand_id=7

OPTION facet_filter_mode='max'

FACET color_id ALL FILTERS

FACET size_id

FACET sku FILTERS color_id, size_id

FACET brand_id EXCLUDE FILTERS color_id;

The per-facet clauses mean:

- ALL FILTERS: apply all main-query filters to this facet

- FILTERS color_id, size_id: apply only color_id and size_id filters to this facet

- EXCLUDE FILTERS color_id: apply all main-query filters except color_id to this facet

These clauses override the filter scope that would otherwise come from facet_filter_mode or MODE. For example, FACET color_id ALL FILTERS MODE max still emits status, but its counts use all main-query filters instead of the broad default max scope.

In auto and max modes SQL facet results add a status column. selected means the bucket value is already present in a same-facet value filter. available means selecting the bucket can produce results; this includes sibling values that expand an existing same-facet filter. In max mode, unavailable means the bucket exists in the broad count scope but selecting it would produce no results. max is the most expensive mode, so on large datasets or facet-heavy queries you should enable it only when you need broad buckets with unavailable values.
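The three status values can be modeled with a short Python sketch over an in-memory list of documents. This is only one reading of the description above, under the assumption that each filter is a set of accepted values per field; the function name bucket_status and the sample documents are hypothetical.

```python
def bucket_status(docs, filters, facet_field, value):
    """Classify one facet bucket as selected, available or unavailable.

    docs: list of dicts; filters: {field: set of selected values}.
    """
    # A value already present in the same-facet filter is selected.
    if value in filters.get(facet_field, set()):
        return "selected"
    # Otherwise check whether adding the value would return any rows
    # while all filters on other fields stay in place.
    others = {f: vals for f, vals in filters.items() if f != facet_field}
    for doc in docs:
        if doc.get(facet_field) != value:
            continue
        if all(doc.get(f) in vals for f, vals in others.items()):
            return "available"
    return "unavailable"  # per the text, only reported in max mode


docs = [
    {"size": "small", "color": "red"},
    {"size": "large", "color": "red"},
    {"size": "medium", "color": "blue"},
]
filters = {"size": {"small"}, "color": {"red"}}
for size in ("small", "large", "medium"):
    print(size, bucket_status(docs, filters, "size", size))
```

Here medium exists in the data but only together with color blue, so selecting it under the current color filter would return nothing.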

For example, with size='small' and facet_filter_mode='max', a FACET size result can look like this. The large bucket is available because selecting it would expand the same facet filter to size IN ('small','large'):

POST /search -d '

{

"table": "products",

"query": {

"bool": {

"must": [

{ "equals": { "color_id": 1 } },

{ "equals": { "size_id": 42 } },

{ "equals": { "brand_id": 7 } }

]

}

},

"facet_filter_mode": "auto",

"aggs": {

"colors": {

"terms": { "field": "color_id" },

"mode": "strict"

},

"sku": {

"terms": { "field": "sku" },

"filters": ["color_id", "size_id"]

},

"brands": {

"terms": { "field": "brand_id" },

"exclude_filters": ["color_id"]

}

}

}'

Notes:

- in SQL, MODE overrides the query-level facet_filter_mode for one facet

- in JSON, mode or filter_mode overrides the top-level facet_filter_mode for one aggregation

- selected values are reported as status=selected for explicit value filters such as = and IN.

- unsupported same-field filters such as ranges do not currently participate in selected-value detection.

- facet-local filter scope supports conjunction-only attribute filters. Complex boolean filter trees are not rewritten per facet.

+-------+----------+-------------+

| size | count(*) | status |

+-------+----------+-------------+

| small | 1 | selected |

| large | 1 | available |

+-------+----------+-------------+

A bucket from another facet can be unavailable when it is present in the broad max counts but has no rows under the current strict filter set.

"aggregations": {

"sizes": {

"buckets": [

{ "key": "small", "doc_count": 1, "status": "selected" },

{ "key": "large", "doc_count": 1, "status": "available" }

]

}

}

And override it per aggregation when needed:

By default, each facet result set is limited to 20 values. The number of facet values can be controlled with the LIMIT clause individually for each facet by providing either a number of values to return in the format LIMIT count or with an offset as LIMIT offset, count.

The maximum number of facet values that can be returned is limited by the query's max_matches setting. If you implement dynamic max_matches (limiting max_matches to offset + per page for better performance), keep in mind that a max_matches value that is too low can reduce the number of facet values returned. In this case, use the smallest max_matches value that still covers the number of facet values you need.
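One way to choose a dynamic max_matches that still leaves room for the facets is sketched below in Python. The helper dynamic_max_matches is hypothetical, and it assumes that a facet with LIMIT offset, count needs at least offset + count matches to be fully populated.

```python
def dynamic_max_matches(offset, per_page, facet_limits):
    """Pick a max_matches that covers both the requested page and every facet.

    facet_limits: list of (offset, count) pairs, one per FACET ... LIMIT clause.
    """
    page_need = offset + per_page
    facet_need = max((o + c for o, c in facet_limits), default=0)
    return max(page_need, facet_need)


# Page 1 with 20 rows per page, plus one facet that should return up to 100 values.
n = dynamic_max_matches(offset=0, per_page=20, facet_limits=[(0, 100)])
query = (
    "SELECT * FROM facetdemo LIMIT 0,20 "
    f"OPTION max_matches={n} "
    "FACET brand_name BY brand_id LIMIT 100"
)
print(query)
```

The page alone would only need max_matches=20 here, but that could cut the facet short, so the larger facet requirement wins.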

- SQL

- JSON

- PHP

- Python

- Python-asyncio

- Javascript

- Java

- C#

- Rust

- TypeScript

- Go

SELECT * FROM facetdemo

FACET brand_name BY brand_id ORDER BY FACET() ASC LIMIT 0,1

FACET brand_name BY brand_id ORDER BY brand_name ASC LIMIT 2,4

FACET brand_name BY brand_id ORDER BY COUNT(*) DESC LIMIT 4;

POST /search -d '

{

"table" : "facetdemo",

"query" : {"match_all" : {} },

"limit": 5,

"aggs" :

{

"group_property" :

{

"terms" :

{

"field":"price",

"size":1

}

},

"group_brand_id" :

{

"terms" :

{

"field":"brand_id",

"size":3

}

}

}

}

'

$index->setName('facetdemo');

$search = $index->search('');

$search->limit(5);

$search->facet('price','price',1);

$search->facet('brand_id','group_brand_id',3);

$results = $search->get();

print_r($results->getFacets());

res = searchApi.search({"table":"facetdemo","query":{"match_all":{}},"limit":5,"aggs":{"group_property":{"terms":{"field":"price","size":1,}},"group_brand_id":{"terms":{"field":"brand_id","size":3}}}})

res = await searchApi.search({"table":"facetdemo","query":{"match_all":{}},"limit":5,"aggs":{"group_property":{"terms":{"field":"price","size":1,}},"group_brand_id":{"terms":{"field":"brand_id","size":3}}}})

res = await searchApi.search({"table":"facetdemo","query":{"match_all":{}},"limit":5,"aggs":{"group_property":{"terms":{"field":"price","size":1,}},"group_brand_id":{"terms":{"field":"brand_id","size":3}}}});

searchRequest = new SearchRequest();

aggs = new HashMap<String,Object>(){{

put("group_property", new HashMap<String,Object>(){{

put("terms", new HashMap<String,Object>(){{

put("field","price");

put("size",1);

}});

}});

put("group_brand_id", new HashMap<String,Object>(){{

put("terms", new HashMap<String,Object>(){{

put("field","brand_id");

put("size",3);

}});

}});

}};

searchRequest.setIndex("facetdemo");

searchRequest.setLimit(5);

query = new HashMap<String,Object>();

query.put("match_all",null);

searchRequest.setQuery(query);

searchRequest.setAggs(aggs);

searchResponse = searchApi.search(searchRequest);

var agg1 = new Aggregation("group_property", "price");

agg1.Size = 1;

var agg2 = new Aggregation("group_brand_id", "brand_id");

agg2.Size = 3;


object query = new { match_all=null };

var searchRequest = new SearchRequest("facetdemo", query);

searchRequest.Aggs = new List<Aggregation> {agg1, agg2};

var searchResponse = searchApi.Search(searchRequest);

let query = SearchQuery::new();

let aggTerms1 = AggTerms {

field: "price".to_string(),

size: Some(1),

};

let agg1 = Aggregation {

terms: Some(Box::new(aggTerms1)),

..Default::default()

};

let aggTerms2 = AggTerms {

field: "brand_id".to_string(),

size: Some(3),

};

let agg2 = Aggregation {

terms: Some(Box::new(aggTerms2)),

..Default::default()

};

let mut aggs = HashMap::new();

aggs.insert("group_property".to_string(), agg1);

aggs.insert("group_brand_id".to_string(), agg2);

let search_req = SearchRequest {

table: "facetdemo".to_string(),

query: Some(Box::new(query)),

aggs: serde_json::json!(aggs),

limit: serde_json::json!(5),

..Default::default()

};

let search_res = search_api.search(search_req).await;

res = await searchApi.search({

index: 'test',

query: { match_all:{} },

aggs: {

name_group: {

terms: { field : 'name', size: 1 }

},

cat_group: {

terms: { field: 'cat' }

}

}

});

query := map[string]interface{} {}

searchRequest.SetQuery(query)

aggByName := manticoreclient.NewAggregation()

aggTerms := manticoreclient.NewAggregationTerms()

aggTerms.SetField("name")

aggByName.SetTerms(aggTerms)

aggByName.SetSize(1)

aggByCat := manticoreclient.NewAggregation()

aggTerms.SetField("cat")

aggByCat.SetTerms(aggTerms)

aggs := map[string]Aggregation{ "name_group": aggByName, "cat_group": aggByCat }

searchRequest.SetAggs(aggs)

res, _, _ := apiClient.SearchAPI.Search(context.Background()).SearchRequest(*searchRequest).Execute()

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+

| id | price | brand_id | title | brand_name | property | j | categories |

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+

| 1 | 306 | 1 | Product Ten Three | Brand One | Six_Ten | {"prop1":66,"prop2":91,"prop3":"One"} | 10,11 |

...

| 20 | 31 | 9 | Product Four One | Brand Nine | Ten_Four | {"prop1":79,"prop2":42,"prop3":"One"} | 12,13,14 |

+------+-------+----------+---------------------+-------------+-------------+---------------------------------------+------------+

20 rows in set (0.01 sec)

+-------------+----------+

| brand_name | count(*) |

+-------------+----------+

| Brand One | 1013 |

+-------------+----------+

1 rows in set (0.01 sec)

+-------------+----------+

| brand_name | count(*) |

+-------------+----------+

| Brand Four | 994 |

| Brand Nine | 944 |

| Brand One | 1013 |

| Brand Seven | 965 |

+-------------+----------+

4 rows in set (0.01 sec)

+-------------+----------+

| brand_name | count(*) |

+-------------+----------+

| Brand Six | 1039 |

| Brand Eight | 1033 |

| Brand Three | 1016 |

+-------------+----------+

3 rows in set (0.01 sec)

{

"took": 3,

"timed_out": false,

"hits": {

"total": 10000,

"hits": [

{

"_id": 1,

"_score": 1,

"_source": {

"price": 197,

"brand_id": 10,

"brand_name": "Brand Ten",

"categories": [

10

]

}

},

...

{

"_id": 5,

"_score": 1,

"_source": {

"price": 805,

"brand_id": 7,

"brand_name": "Brand Seven",

"categories": [

11,

12,

13

]

}

}

]

},

"aggregations": {

"group_property": {

"buckets": [

{

"key": 1000,

"doc_count": 11

}

]

},

"group_brand_id": {

"buckets": [

{

"key": 10,

"doc_count": 1019

},

{

"key": 9,

"doc_count": 954

},

{

"key": 8,

"doc_count": 1021

}

]

}

}

}

Array

(

[price] => Array

(

[buckets] => Array

(

[0] => Array

(

[key] => 1000

[doc_count] => 11

)

)

)

[group_brand_id] => Array

(

[buckets] => Array

(

[0] => Array

(

[key] => 10

[doc_count] => 1019

)

[1] => Array

(

[key] => 9

[doc_count] => 954

)

[2] => Array

(

[key] => 8

[doc_count] => 1021

)

)

)

)

{'aggregations': {u'group_brand_id': {u'buckets': [{u'doc_count': 1019,

u'key': 10},

{u'doc_count': 954,

u'key': 9},

{u'doc_count': 1021,

u'key': 8}]},

u'group_property': {u'buckets': [{u'doc_count': 11,

u'key': 1000}]}},

'hits': {'hits': [{u'_id': u'1',

u'_score': 1,

u'_source': {u'brand_id': 10,

u'brand_name': u'Brand Ten',

u'categories': [10],

u'price': 197,

u'property': u'Six',

u'title': u'Product Eight One'}},

{u'_id': u'2',

u'_score': 1,

u'_source': {u'brand_id': 6,

u'brand_name': u'Brand Six',

u'categories': [12, 13, 14],

u'price': 671,

u'property': u'Four',

u'title': u'Product Nine Seven'}},

{u'_id': u'3',

u'_score': 1,

u'_source': {u'brand_id': 3,

u'brand_name': u'Brand Three',

u'categories': [13, 14, 15],

u'price': 92,

u'property': u'Six',

u'title': u'Product Five Four'}},

{u'_id': u'4',

u'_score': 1,

u'_source': {u'brand_id': 10,

u'brand_name': u'Brand Ten',

u'categories': [11],

u'price': 713,

u'property': u'Five',

u'title': u'Product Eight Nine'}},

{u'_id': u'5',

u'_score': 1,

u'_source': {u'brand_id': 7,

u'brand_name': u'Brand Seven',

u'categories': [11, 12, 13],

u'price': 805,

u'property': u'Two',

u'title': u'Product Ten Three'}}],

'max_score': None,

'total': 10000},

'profile': None,

'timed_out': False,

'took': 0}

{'aggregations': {u'group_brand_id': {u'buckets': [{u'doc_count': 1019,

u'key': 10},

{u'doc_count': 954,

u'key': 9},

{u'doc_count': 1021,

u'key': 8}]},

u'group_property': {u'buckets': [{u'doc_count': 11,

u'key': 1000}]}},

'hits': {'hits': [{u'_id': u'1',

u'_score': 1,

u'_source': {u'brand_id': 10,

u'brand_name': u'Brand Ten',

u'categories': [10],

u'price': 197,

u'property': u'Six',

u'title': u'Product Eight One'}},

{u'_id': u'2',

u'_score': 1,

u'_source': {u'brand_id': 6,

u'brand_name': u'Brand Six',

u'categories': [12, 13, 14],

u'price': 671,

u'property': u'Four',

u'title': u'Product Nine Seven'}},

{u'_id': u'3',

u'_score': 1,

u'_source': {u'brand_id': 3,

u'brand_name': u'Brand Three',

u'categories': [13, 14, 15],

u'price': 92,

u'property': u'Six',

u'title': u'Product Five Four'}},

{u'_id': u'4',

u'_score': 1,

u'_source': {u'brand_id': 10,

u'brand_name': u'Brand Ten',

u'categories': [11],

u'price': 713,

u'property': u'Five',

u'title': u'Product Eight Nine'}},

{u'_id': u'5',

u'_score': 1,

u'_source': {u'brand_id': 7,

u'brand_name': u'Brand Seven',

u'categories': [11, 12, 13],

u'price': 805,

u'property': u'Two',

u'title': u'Product Ten Three'}}],

'max_score': None,

'total': 10000},

'profile': None,

'timed_out': False,